The Sugar Act of 1764: The Tax Cut That Sparked a Revolution

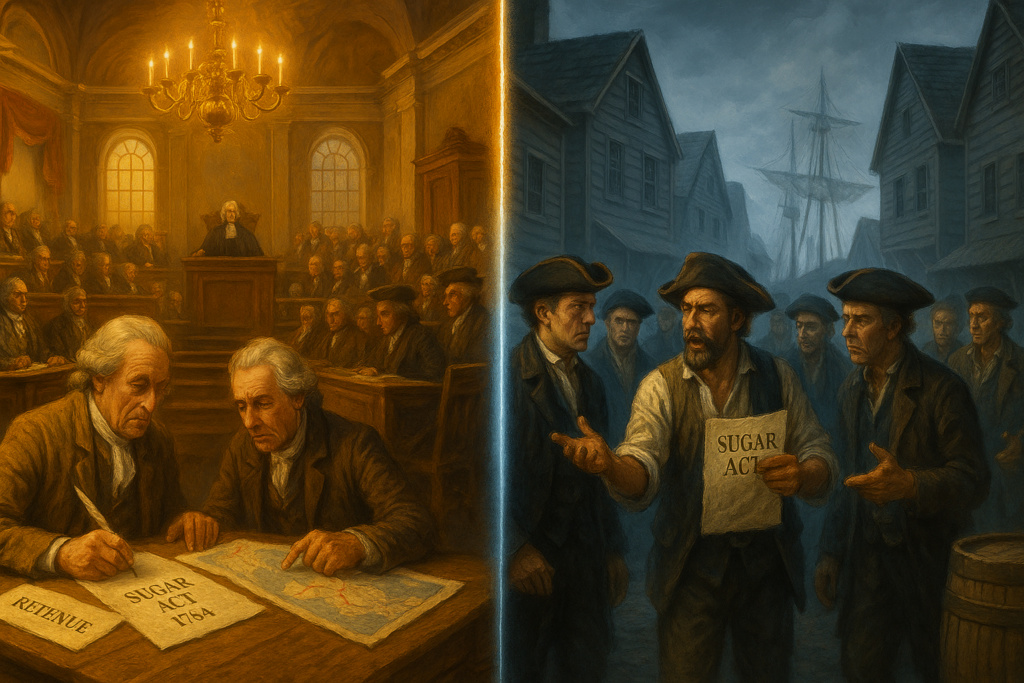

Imagine a time when people rose up in protest of a tax being lowered. Welcome to the world of the Sugar Act.

The Sugar Act of 1764 stands as one of the most ironic moments in the history of taxation. Here Britain was actually lowering a tax, and yet colonists reacted with a fury that would help spark a revolution. To understand this paradox, we must recognize that the new act represented something far more threatening than any previous attempt by Britain to regulate its American colonies.

The Old System: Benign Neglect

For decades before 1764, Britain had maintained what historians call “salutary neglect” toward its American colonies. The Molasses Act of 1733 had imposed a steep duty of six pence per gallon on foreign molasses imported into the colonies. On paper, this seemed like a significant burden for the rum-distilling industry, which depended heavily on cheap molasses from French and Spanish Caribbean islands. In practice, though, the tax was rarely collected. Colonial merchants either bribed customs officials or simply smuggled the molasses past them. The British government essentially looked the other way, and everyone profited.

This informal arrangement worked because Britain’s primary interest in the colonies was commercial, not fiscal. The Navigation Acts required colonists to ship certain goods only to Britain and to buy manufactured goods from British merchants, which enriched British traders and manufacturers without requiring aggressive tax collection in America. As long as this system funneled wealth toward London, Parliament didn’t care much about collecting relatively small customs duties across the Atlantic.

Everything Changed in 1763

The Seven Years’ War (which Americans call the French and Indian War) changed this comfortable arrangement entirely. Britain won decisively, driving France out of North America and gaining vast new territories. But victory came with a staggering price tag. Britain’s national debt had nearly doubled to £130 million, and annual interest payments alone consumed half the government’s budget. Meanwhile, Britain now needed to maintain 10,000 troops in North America to defend its expanded empire and manage relations with Native American tribes.

Prime Minister George Grenville faced a political problem. British taxpayers, already heavily burdened, were in no mood for additional taxes. The logic seemed obvious: since the colonies had benefited from the war’s outcome and still required military protection, they should help pay for their own defense. Americans paid far lower taxes than their counterparts in Britain; by some estimates, British residents paid 26 times more per capita in taxes than colonists did.

What the Act Actually Did

The Sugar Act (officially the American Revenue Act of 1764) approached colonial taxation differently than anything before it. First, it cut the duty on foreign molasses from six pence to three pence per gallon—a 50% reduction. Grenville calculated, reasonably, that merchants might actually pay a three-pence duty rather than risk getting caught smuggling, whereas the six-pence duty had been so high it encouraged universal evasion.

But the Act did far more than adjust molasses duties. It added or increased duties on foreign textiles, coffee, indigo, and wine imported into the colonies. It also tightened regulations around the colonial lumber trade and banned the import of foreign rum entirely. Most significantly, the Act included elaborate provisions designed to strictly enforce these duties for the first time.

The enforcement mechanisms represented the real revolution in British policy. Ship captains now had to post bonds before loading cargo and had to maintain detailed written cargo lists. Naval patrols increased dramatically. Smugglers faced having their ships and cargo seized.

Significantly, the burden of proof was shifted to the accused. They were required to prove their innocence, a reversal of traditional British justice. Most controversially, accused smugglers would be tried in vice-admiralty courts, which had no juries and whose judges received a cut of any fines levied.

The Paradox of the Lower Tax

So why did colonists react so angrily to a tax cut? The answer reveals the fundamental shift in the British-American relationship that the Sugar Act represented.

First, the issue wasn’t the tax rate. It was the certainty of collection. A six-pence tax that no one paid was infinitely preferable to a three-pence tax rigorously enforced. New England’s rum distilling industry, which employed thousands of distillery workers and sailors, depended on cheap molasses from the French West Indies. Even at three pence per gallon, the tax significantly increased operating costs. Many merchants calculated they couldn’t remain profitable if they had to pay it.

Second, and more importantly, colonists recognized that the Act’s stated purpose changed their relationship with Britain. Previous trade regulations, even if they involved taxes, were ostensibly about regulating commerce within the empire. The Sugar Act openly stated its purpose was raising revenue: the preamble declared it was “just and necessary that a revenue be raised” in America. This might seem like a technical distinction, but to colonists it mattered enormously. British constitutional theory held that subjects could only be taxed by their own elected representatives. Colonists elected representatives to their own assemblies but sent no representatives to Parliament. Trade regulations fell under Parliament’s legitimate authority to govern imperial commerce, but taxation for revenue was something else entirely.

Third, the enforcement mechanisms offended colonial sensibilities about justice and traditional British rights. The vice-admiralty courts denied jury trials, which colonists viewed as a fundamental right of British subjects. Having to prove your innocence rather than being presumed innocent violated another core principle. Customs officials and judges profiting from convictions created obvious incentives for abuse.

Implementation and Colonial Response

The Act took effect in September 1764, and Grenville paired it with an aggressive enforcement campaign. The Royal Navy assigned 27 ships to patrol American waters. Britain appointed new customs officials and instructed them to do their jobs strictly rather than accept bribes. The vice-admiralty court in Halifax, Nova Scotia, became particularly notorious. Colonists had to travel hundreds of miles to defend themselves in a court with no jury and with a judge whose income came from convictions.

Colonists responded immediately. Boston merchants drafted a protest arguing that the act would devastate their trade. They explained that New England’s economy depended on a complex triangular trade: they sold lumber and food to the Caribbean in exchange for molasses, which they distilled into rum, which they sold to Africa for slaves, who were sold to Caribbean plantations for molasses, and the cycle repeated. Taxing molasses would break this chain and impoverish the region.

But the economic arguments quickly evolved into constitutional ones. Lawyer James Otis argued that “taxation without representation is tyranny”—a phrase that would echo through the coming decade. Colonial assemblies began passing resolutions asserting their exclusive right to tax their own constituents. They didn’t deny Parliament’s authority to regulate trade, but they drew a clear line: revenue taxation required representation.

The protests went beyond rhetoric. Colonial merchants organized boycotts of British manufactured goods. Women’s groups pledged to wear homespun cloth rather than buy British textiles. These boycotts caused enough economic pain in Britain that London merchants began lobbying Parliament for relief.

The Road to Revolution

The Sugar Act’s significance extends far beyond its immediate economic impact. It established precedents and patterns that would define the next decade of imperial crisis.

Most fundamentally, it shattered the comfortable arrangement of salutary neglect. Once Britain demonstrated it intended to actively govern and tax the colonies, the relationship could never return to its previous informality. The colonists’ constitutional objections—no taxation without representation, right to jury trials, presumption of innocence—would be repeated with increasing urgency as Parliament passed the Stamp Act (1765), Townshend Acts (1767), and Tea Act (1773).

The Sugar Act also revealed the practical difficulties of governing an empire across 3,000 miles of ocean. The vice-admiralty courts became symbols of distant, unaccountable power. When colonists couldn’t get satisfaction through established legal channels, they increasingly turned to extralegal methods, including committees of correspondence, non-importation agreements, and eventually armed resistance.

Perhaps most importantly, the Sugar Act forced colonists to articulate a political theory that ultimately proved incompatible with continued membership in the British Empire. Once they agreed to the principle that they could only be taxed by their own elected representatives, and that Parliament’s authority over them was limited to trade regulation, the logic led inexorably toward independence. Britain couldn’t accept colonial assemblies as co-equal governing bodies since Parliament claimed supreme authority over all British subjects. The colonists couldn’t accept taxation without representation since they claimed the rights of freeborn Englishmen. These positions couldn’t be reconciled.

The Sugar Act of 1764 represents the point where the British Empire’s century-long success in North America began to unravel. By trying to make the colonies pay a modest share of imperial costs through what seemed like reasonable means, Britain inadvertently set in motion forces that would break the empire apart just twelve years later.

Sources

Mount Vernon Digital Encyclopedia – Sugar Act https://www.mountvernon.org/library/digitalhistory/digital-encyclopedia/article/sugar-act/ Provides overview of the Act’s provisions, economic context, and relationship to British debt from the Seven Years’ War. Includes information on tax burden comparisons between Britain and the colonies.

Britannica – Sugar Act https://www.britannica.com/event/Sugar-Act Covers the specific provisions of the Act, enforcement mechanisms, vice-admiralty courts, and the shift from the Molasses Act of 1733. Useful for technical details of the legislation.

History.com – Sugar Act https://www.history.com/topics/american-revolution/sugar-act Discusses colonial constitutional objections, the “taxation without representation” argument, and the enforcement provisions including burden of proof reversal and jury trial denial.

American Battlefield Trust – Sugar Act https://www.battlefields.org/learn/articles/sugar-act Details colonial response including boycotts, James Otis’s arguments, and the triangular trade system that the Act disrupted.

Additional Recommended Sources

Library of Congress – The Sugar Act https://www.loc.gov/collections/continental-congress-and-constitutional-convention-broadsides/articles-and-essays/continental-congress-broadsides/broadsides-related-to-the-sugar-act/ Primary source collection including contemporary colonial broadsides and protests against the Act.

National Archives – The Sugar Act (Primary Source Text) https://founders.archives.gov/about/Sugar-Act The actual text of the American Revenue Act of 1764, useful for verifying specific provisions and language.

Yale Law School – Avalon Project: Resolutions of the Continental Congress (October 19, 1765) https://avalon.law.yale.edu/18th_century/resolu65.asp Colonial responses to the Sugar and Stamp Acts, showing how the arguments evolved.

Massachusetts Historical Society – James Otis’s Rights of the British Colonies Asserted and Proved (1764) https://www.masshist.org/digitalhistory/revolution/taxation-without-representation Primary source for the “taxation without representation” argument that emerged from Sugar Act opposition.

Colonial Williamsburg Foundation – Sugar Act of 1764 https://www.colonialwilliamsburg.org/learn/deep-dives/sugar-act-1764/ Discusses economic impact on colonial merchants and the rum distilling industry.

Bull Markets, Bear Markets and the Story Behind Wall Street’s Most Famous Animals

By John Turley

On January 13, 2026

In Commentary, History

If you’ve ever caught a business news segment, you’ve probably heard anchors throwing around terms like “bull market” and “bear market” as if everyone just naturally knows what they mean. But beyond the basic idea that one’s good and one’s bad, the real mechanics of these market conditions—and how they got their animal nicknames—are pretty interesting.

How the Stock Market Works (The Quick Version)

Before we dive into bulls and bears, let’s cover the basics. The stock market is essentially a place where people buy and sell ownership stakes in companies. When you buy a share of stock, you’re purchasing a tiny piece of that company. The price of that share goes up or down based on how many people want to buy it versus how many want to sell it—classic supply and demand.

Companies sell shares to raise money for growth, and investors buy them hoping the company will do well and the stock price will increase. The overall “market” is tracked through indexes like the S&P 500 or Dow Jones Industrial Average, which measure how a group of major companies is performing. When most stocks are rising, we say the market is up; when most are falling, the market is down.

What Bull and Bear Markets Actually Mean

A bull market refers to a period when stock prices are rising, typically defined as a 20% or more increase from recent lows. During bull markets, investors are optimistic, companies are generally doing well, and people are more willing to take risks with their money. Bull markets are usually driven by a strong economy with low inflation. Think of the economic boom of the late 1990s or the recovery after the 2008 financial crisis: those were classic bull markets where prices kept climbing for years.

A bear market is essentially the opposite: a general decline in the stock market over time, usually defined as a 20% or more price decline over at least a two-month period. During bear markets, investors get nervous, sell off their holdings, and pessimism spreads. A decline of 10% to 20% is classified as a correction, and a market always passes through correction territory before it reaches a bear market. The Great Depression, the 2008 financial crisis, and the COVID-19 pandemic’s initial impact all triggered bear markets.
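The percentage thresholds above can be turned into a quick back-of-the-envelope check. The sketch below is only an illustration: the function names and the "no correction" label are made up for this example, and it ignores the two-month duration part of the bear market definition, looking only at how far a price has moved from a recent high or low.

```python
def classify_decline(recent_high, current):
    """Label a drop from a recent high using the 10% and 20% rules of thumb.

    Hypothetical helper for illustration; it ignores how long the decline lasted.
    """
    if recent_high <= 0:
        raise ValueError("recent_high must be positive")
    drop = (recent_high - current) / recent_high
    if drop >= 0.20:
        return "bear market"
    if drop >= 0.10:
        return "correction"
    return "no correction"


def is_bull_market(recent_low, current):
    """Return True if the price has risen 20% or more from a recent low."""
    if recent_low <= 0:
        raise ValueError("recent_low must be positive")
    gain = (current - recent_low) / recent_low
    return gain >= 0.20


if __name__ == "__main__":
    # An index that peaked at 100 and now sits at 85 is down 15%.
    print(classify_decline(100, 85))
    # An index that bottomed at 50 and now sits at 60 is up 20%.
    print(is_bull_market(50, 60))
```

With these numbers, a fall from 100 to 85 lands in correction territory, while a rise from 50 to 60 meets the 20% bull market threshold.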

The Colorful History Behind the Terms

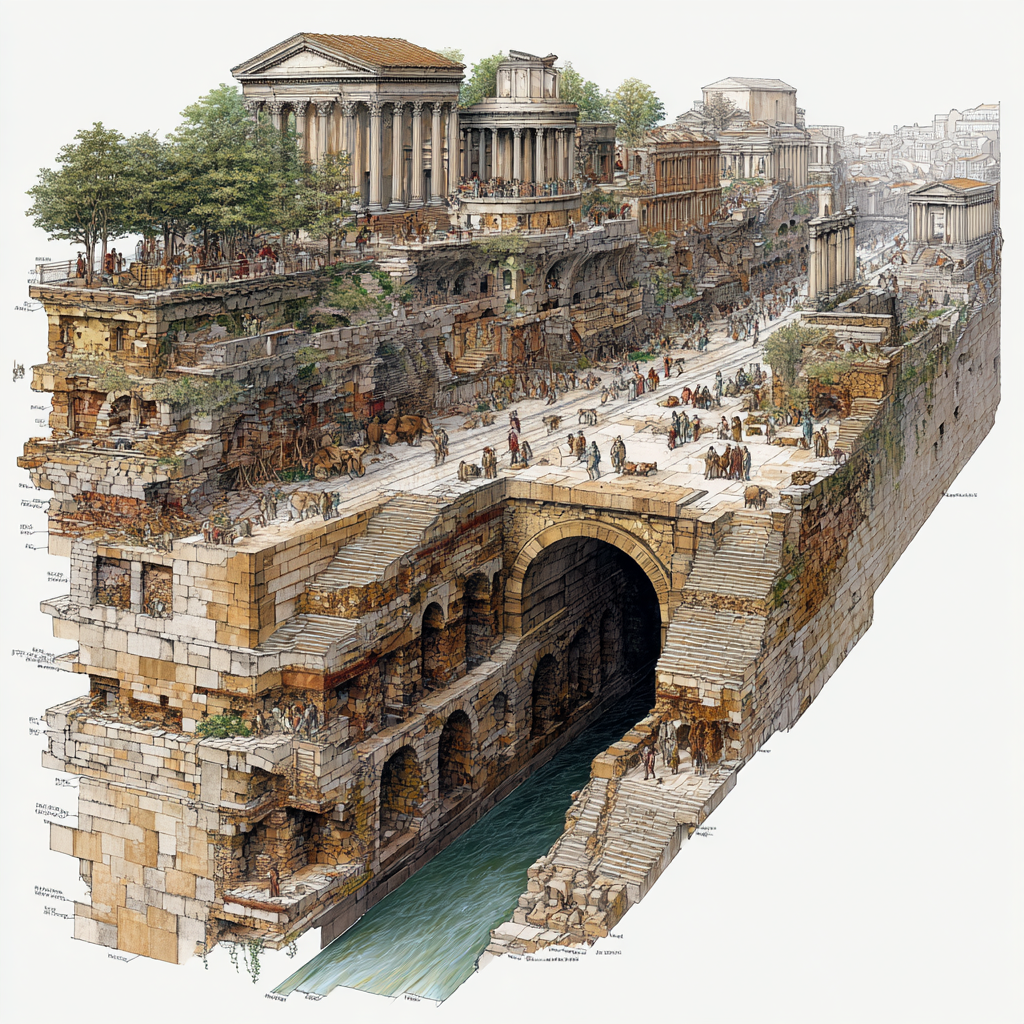

Now here’s where things get interesting. These terms didn’t come from some modern marketing genius—they trace back to 18th century London, and the story involves everything from old proverbs to violent animal fights to one of history’s biggest financial scandals.

The “bear” term came first. Etymologists point to an old proverb, warning that it is not wise “to sell the bear’s skin before one has caught the bear”. This saying was about the foolishness of counting on something before you actually have it. By the early 1700s, traders who engaged in short selling (betting that prices would fall) were called “bear-skin jobbers” because they sold a bear’s skin—the shares—before catching the bear. The term eventually got shortened to just “bears.”

The real watershed moment came with the South Sea Bubble of 1720. The South Sea Company was a British joint stock company founded by an act of Parliament in 1711. In 1720, the company assumed most of the British national debt and convinced investors to give up state annuities for company stock sold at a very high premium. When everything collapsed, share prices dropped dramatically, starting a “bear market.” The story became the topic of many literary works and went down in history as an infamous metaphor.

As for “bull,” the origins are a bit murkier. The first known instance of the market term “bull” popped up in 1714, shortly after the “bear” term emerged. Most historians think it arose as a natural counterpoint to “bear,” possibly influenced by bull-baiting and bear-baiting, two animal fighting sports popular at the time—though I should note that’s somewhat speculative.

There’s also a popular explanation about how the animals attack: bears swipe downward with their paws while bulls thrust upward with their horns, which nicely mirrors market movements. While that’s a helpful memory device, it’s probably more of a convenient coincidence or a retroactive description than the actual origin. The term “bull” originally meant a speculative purchase in the expectation that stock prices would rise, and was later applied to the person making such purchases.

Why This Still Matters

These metaphors have stuck around for three centuries because they work. They’re visceral and easy to remember—you can picture a charging bull or a hibernating bear without needing an economics degree. They also capture something real about market psychology: the aggressive optimism of bulls pushing prices up versus the defensive pessimism of bears hunkering down.

Understanding these terms helps you follow financial news and, more importantly, recognize when markets are shifting. Knowing you’re in a bull market might make you less surprised by rising prices, while recognizing a bear market can help you avoid panic-selling when things look grim.

“Bull market” and “bear market” are among those terms I’ve heard for years without ever knowing their origin. This article is an attempt to explain it to myself.

Sources: