What’s Really Happening in Your Brain

You’re probably multitasking right now. Maybe you’re reading this with a podcast playing in the background, or you’ve got three browser tabs open and you’re checking your phone every few minutes. We all do it. We even brag about it on resumes: “Excellent multitasker!” But here’s the uncomfortable truth that neuroscience has been trying to tell us for years—what we call multitasking is mostly an illusion.

What People Actually Mean

When most people say they’re multitasking, they’re describing one of two scenarios. The first is doing multiple automatic activities simultaneously—like walking while talking, or listening to music while folding laundry. The second, and the one that gets more interesting, is rapidly switching attention between different demanding tasks—like answering emails while on a conference call, or texting while watching TV.

The distinction matters because our brains handle these situations very differently. Activities that have become automatic through practice don’t require much conscious attention. You can absolutely walk and chew gum at the same time because neither activity demands your prefrontal cortex’s full attention. But when both tasks require active thinking and decision-making? That’s where things get complicated.

The Brain’s Bottleneck

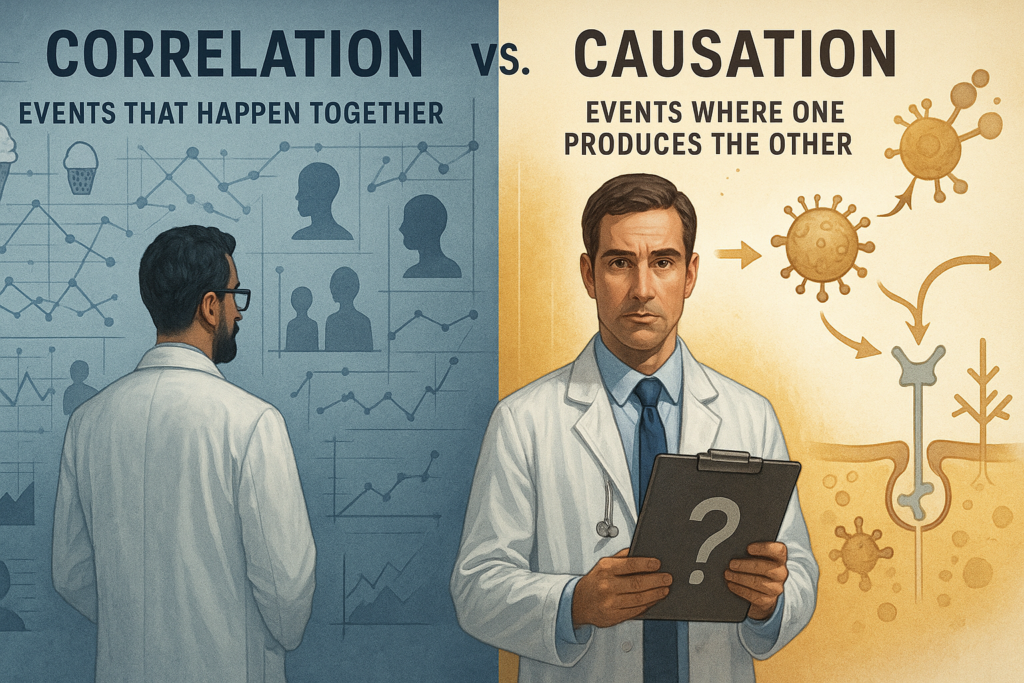

Here’s what neuroscience tells us: true multitasking—simultaneously processing multiple streams of complex information—is essentially impossible for the human brain. What feels like multitasking is actually rapid task-switching, and your brain pays a price every time it makes that switch.

The limitation comes from something researchers call the “response selection bottleneck.” When you’re performing tasks that require conscious thought, they all funnel through the same neural pathways in your prefrontal cortex. This region can only process one demanding task at a time, so when you think you’re doing two things at once, you’re really just toggling between them very quickly.

Studies using functional MRI brain imaging have shown what happens during this switching process. When people attempt to multitask, researchers observe reduced activity in the regions responsible for each individual task compared to when those tasks are done separately. Your brain literally can’t devote full processing power to both activities simultaneously.

The Switching Cost

Every time you switch from one task to another, there’s a cognitive cost. Your brain needs to disengage from the first task, shift attention, and then reorient to the new task. This happens so quickly, sometimes in tenths of a second, that we don’t consciously notice it. But those fractions of a second add up.

Sorry, but get ready for some doctor talk. When people switch tasks, imaging studies show increased activation in the frontoparietal control and dorsal attention networks, especially in prefrontal regions (like the inferior frontal junction) and the parietal cortex (such as the intraparietal sulcus). This extra activity reflects the brain dropping one task set, loading another into working memory, and reorienting attention, processes that consume time and neural resources.

Over time, practice can make specific tasks more automatic, reducing average activity in these control networks and allowing smoother coordination of tasks. However, even in trained multitaskers, studies still find evidence for serial queuing of operations in the multiple‑demand frontoparietal network, reinforcing the idea that consciously doing multiple demanding things “at once” is extremely limited.

Research from Stanford University found that people who regularly engage in heavy media multitasking actually perform worse at filtering out irrelevant information and switching between tasks than light media multitaskers. Essentially, chronic multitaskers appear to be worse at the very thing they practice most.

Even when people train extensively, studies indicate they mainly become faster at switching and coordinating, not truly doing two demanding tasks at once. Experimental work using reaction‑time paradigms shows a reliable “switch cost”: when people change tasks, responses get slower and more error‑prone compared to staying with one task. This cost is one of the strongest signs that most human “multitasking” is serial switching under time pressure rather than genuine simultaneous processing.

The American Psychological Association reports that the mental blocks created by switching between tasks can cost as much as 40% of productive time. Think about that for a minute: up to two of every five working hours potentially lost to the mechanics of jumping between activities.

The Attention Residue Problem

There’s another wrinkle that makes multitasking even less efficient. When you switch away from a task before completing it, part of your attention remains stuck on the unfinished work. Researchers call this “attention residue,” and it reduces your cognitive performance on the next task.

Sophie Leroy, a business professor at the University of Washington, demonstrated this effect in a series of studies. People who switched away from an unfinished task performed significantly worse on the second task than people who finished the first task before moving on. The unfinished task keeps running in your mental background, using up cognitive resources you need for the new activity.

When “Multitasking” Actually Works

There are legitimate exceptions to the no-multitasking rule, but they’re more limited than most people think. You can successfully combine activities when at least one of them is so well-practiced that it’s become automatic—essentially requiring no conscious thought. You can listen to an audiobook while jogging because your body handles the running on autopilot.

Some research also suggests that certain types of background music or ambient noise can enhance performance on creative tasks, though this seems to work best when the music is familiar and lacks lyrics that compete with language-processing tasks.

Why We Keep Trying

If multitasking is so inefficient, why do we persist? Part of the answer lies in how it feels. Switching to something new, like a fresh notification or message, is thought to engage the brain’s dopamine-driven reward system. Every time you check your phone or switch to a new browser tab, you get a little neurochemical hit. It feels productive, even when it isn’t.

There’s also a cultural element. We live in an attention economy where being constantly connected and responsive feels mandatory. Focusing on one thing can feel like you’re missing out or falling behind, even though the research consistently shows that single-tasking produces better results faster.

It’s worth noting that studies find a real gap between how good people think they are at multitasking and how well they actually perform. People who think they are excellent multitaskers tend to be the worst at it.

The Bottom Line

The evidence is pretty clear: what we call multitasking is really task-switching, and it makes us slower and more error-prone at both activities. Your brain has a fundamental processing limitation that hasn’t changed despite our increasingly multi-screen world. The prefrontal cortex can only fully engage with one complex task at a time, and switching between tasks creates cognitive costs that add up to significant lost productivity and increased mistakes.

This doesn’t mean you should never listen to music while working or that walking while talking will melt your brain. But when you’re doing something that really matters (writing an important email, having a meaningful conversation, learning something new), giving it your full attention will almost always produce better results than splitting your focus.

Merry Christmas from The Grumpy Doc

By John Turley

On December 24, 2025

In Commentary