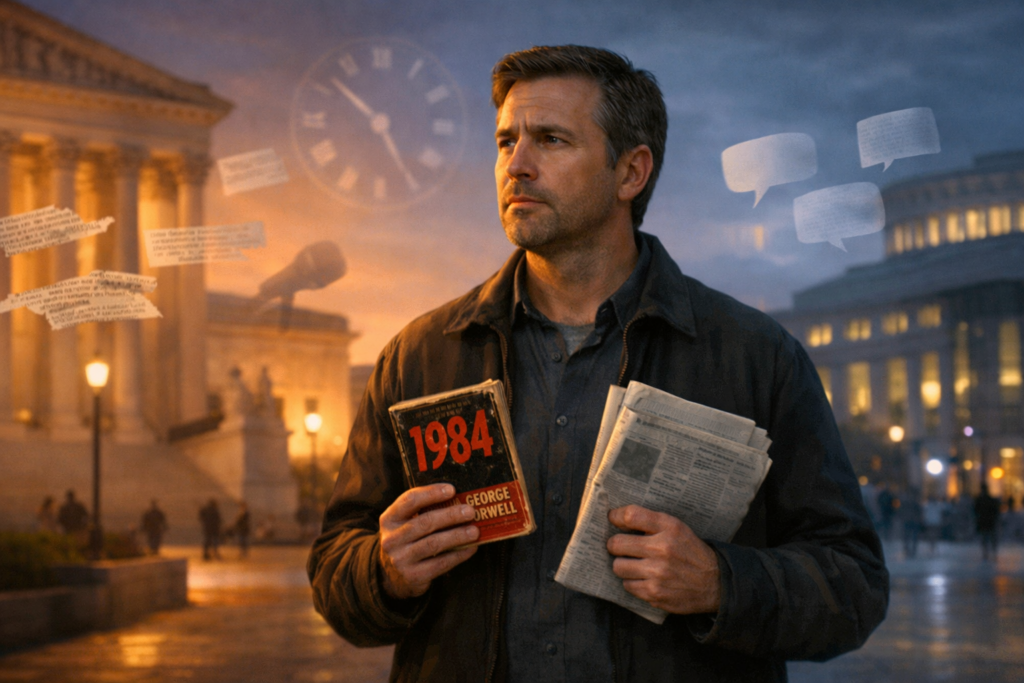

Were it left to me to decide whether we should have a government without newspapers, or newspapers without a government, I should not hesitate a moment to prefer the latter. But I should mean that every man should receive those papers and be capable of reading them. —Thomas Jefferson

I originally posted this article about a year and a half ago. I was concerned about the future of newspapers then and I’m even more concerned now. I’ve updated my original post to reflect recent losses of newspapers.

When I was growing up in Charleston, WV, in the 1950s and early 1960s, we had two daily newspapers. The Gazette was delivered in the morning and the Daily Mail was delivered in the afternoon. One of my first jobs as a boy was delivering The Gazette. It worked out to be about 50 cents an hour, but I was glad to have the job. (It was good money at the time.)

Ostensibly, the Gazette was a Democratic newspaper, and the Daily Mail was a Republican one. However, given the politics of the day there was not a significant difference between the two, and most people subscribed to both.

There weren’t a lot of options for news at the time. Of course, there were no 24-hour news channels. National news on the three networks was about 30 minutes an evening, with local news at about 15 minutes. By the late 1960s national news had increased to 60 minutes and most local news to about 30 minutes. Even so, given the limited airtime on the local stations, most of the broadcast was taken up with weather, sports, and human interest stories, leaving little time to expand on hard news.

We depended on our newspapers for news of our cities, counties, and states. And the newspapers delivered the news we needed. Almost everyone subscribed to and read the local papers. They kept us informed about our local politicians and government and provided local insight on national events. They were also our source for information about births, deaths, marriages, high school graduations and everything we wanted to know about our community.

In the 21st century there are many more supposed news options. There are the 24-hour news networks, which I’ve discussed in a previous post. And of course, there are Instagram, Facebook, X, and the other online entities that claim to provide news.

There has been one positive development in television news. Local news, at least in Charleston, has expanded to two hours most evenings. There is some repetition between the first and second hour, and it is still heavily weighted to sports, weather, and human interest, but there is some increased coverage of local hard news. However, this is somewhat akin to reading the headlines and the first paragraph of a newspaper story. It doesn’t provide in-depth coverage, but it is an improvement over what is otherwise available to people who don’t watch a dedicated news program. Hopefully, it motivates people to find out more about events that concern them.

The situation has become dire in recent months. The crisis that was building when I first wrote about newspapers has now reached catastrophic proportions. On December 31, 2025, the Atlanta Journal-Constitution published its last print edition after 157 years, making Atlanta the largest U.S. metro area without a printed daily newspaper. Think about that—a major American city, home to over six million people in its metro area, now has no physical newspaper you can hold in your hands.

In February 2025, the Newark Star-Ledger, New Jersey’s largest newspaper, stopped printing after nearly 200 years. The Jersey Journal, which had served Hudson County for 157 years, closed entirely. These weren’t small-town weeklies; they were major metropolitan dailies that once served millions of readers. The Pittsburgh Post-Gazette, founded in 1786, has announced that it will cease publication effective May 3, 2026.

Even more alarming is what just happened at the Washington Post. Just days ago, in early February 2026, owner Jeff Bezos ordered the elimination of roughly one-third of the newspaper’s workforce—approximately 300 journalists. The Post closed its entire sports section, shuttered its books department, gutted its foreign bureaus and metro desk, and canceled its flagship daily podcast. This is the same newspaper that brought down a presidency with its Watergate coverage and has won dozens of Pulitzer Prizes. The Post’s metro desk, which once had 40 reporters covering the nation’s capital, now has just a dozen. All the paper’s photojournalists were laid off. The entire Middle East team was eliminated.

Former Washington Post executive editor Martin Baron, who led the paper from 2013 to 2021, called the cuts devastating and blamed poor management decisions, including Bezos’s decision to spike the newspaper’s presidential endorsement in 2024, which led to the cancellation of hundreds of thousands of subscriptions. The Post lost an estimated $100 million in 2024.

The numbers tell a grim story. Since 2005, more than 3,200 newspapers have closed in the United States—that’s over one-third of all the newspapers that existed just twenty years ago. Newspapers continue to disappear at a rate of more than two per week. In the past year alone, 136 newspapers shut their doors.

Fewer than 5,600 newspapers now remain in America, and fewer than 1,000 of those are dailies. Even among those “dailies,” more than 80 percent print fewer than seven days a week. We now have 213 counties that are complete “news deserts,” places with no local news source at all. Another 1,524 counties have only one remaining news source, usually a struggling weekly newspaper. Taken together, about 50 million Americans now have limited or no access to local news.

Will TV news be able to provide the details about our community? The format of the newspaper allows for more detailed presentations and for a larger variety of stories. The reader can pick which stories to read, when to read them and how much of each to read. The very nature of broadcast news doesn’t allow these options.

I beg everyone to please subscribe to your local newspaper if you still have one. Though I still prefer the hands-on, physical newspaper, I understand many people want to keep up with the digital age. If you do, please subscribe to the digital edition of your local newspaper, and don’t pretend that other online sources, such as social media, will provide you with local news. More likely, you’ll just get gossip, or worse.

If we lose our local news, we are in danger of losing our freedom of information, and if we lose that, we’re in danger of losing our country. To those of you who think I’m fearmongering: countries that have succumbed to dictatorship first lost their free press.

I believe that broadcast news will never be the free press that print journalism is. The broadcast is an ethereal thing. You hear it and it’s gone. Of course, it is always possible to record it and play it back, but most people don’t. If you have a newspaper, you can read it, think about it, and read it again. There are times when on my second or third reading of an editorial or an op-ed article, I’ve changed my opinion about either the subject or the writer of the piece. I don’t think a news broadcast lends itself to this type of reflection. In fact, when listening to the broadcast news I often find my mind wandering as something that the broadcaster said sends me in a different direction.

In my opinion, broadcast news is controlled by advertising dollars and viewer ratings. News seems to be treated like any entertainment program, catering to whatever generates ratings rather than to the facts. I recognize that this can be the case with newspapers as well, but it seems to me that it’s much easier to detect bias in the written word than in the spoken word. Too often we can get caught up in the emotions of the presenter or in the graphics that accompany the story.

With that in mind, if you want unbiased journalism, please support your local newspapers before we lose them. Once they are gone, we will never get them back, and we will all be much the poorer as a result.

I will leave you with one last quote.

A free press is the unsleeping guardian of every other right that free men prize; it is the most dangerous foe of tyranny. —Winston Churchill

The only way to preserve freedom is to preserve the free press. Do your part! Subscribe!

And you can quote The Grumpy Doc on that!!!!

Sources

Fortune (August 29, 2025): “Atlanta becomes largest U.S. metro without a printed daily newspaper as Journal-Constitution goes digital”

https://fortune.com/2025/08/29/atlanta-largest-metro-without-printed-newpsaper-digital-journal-constitution/

Northwestern University Medill School (2025): “News deserts hit new high and 50 million have limited access to local news, study finds”

https://www.medill.northwestern.edu/news/2025/news-deserts-hit-new-high-and-50-million-have-limited-access-to-local-news-study-finds.html

NBC News (February 2026): “Washington Post lays off one-third of its newsroom”

https://www.nbcnews.com/business/media/washington-post-layoffs-sports-rcna257354

CNN Business (February 4, 2026): “Jeff Bezos-owned Washington Post conducts widespread layoffs, gutting a third of its staff”

https://www.cnn.com/2026/02/04/media/washington-post-layoffs

Northwestern University Medill Local News Initiative (2024): “The State of Local News Report 2024”

https://localnewsinitiative.northwestern.edu/projects/state-of-local-news/2024/report/

Understanding Critical Race Theory: What It Is—and Why It Divides America

By John Turley

On March 2, 2026

When I first started hearing debates about Critical Race Theory, I thought that these people couldn’t possibly be talking about the same thing. There seemed to be no common ground; even the words they were using seemed to have different meanings.

Critical Race Theory (CRT) has become one of the most contested intellectual concepts in contemporary American culture. Originally developed in law schools during the 1970s and 1980s, CRT has evolved into a broad analytical framework for examining how race and racism operate in society. Understanding its origins, core principles, and the political debates surrounding it requires examining both its academic foundations and its journey into public consciousness.

Origins and Early Development

Legal scholars who were dissatisfied with the slow pace of racial progress following the Civil Rights Movement laid the groundwork for CRT. The early figures included Derrick Bell, often considered the father of CRT, along with Alan Freeman, Richard Delgado, Kimberlé Crenshaw, and Cheryl Harris. These scholars were frustrated that despite landmark legislation like the Civil Rights Act of 1964 and the Voting Rights Act of 1965, racial inequality persisted across American institutions.

The intellectual roots of CRT can be traced to Critical Legal Studies, a movement that challenged traditional legal scholarship’s claims of objectivity and neutrality. However, CRT scholars felt that Critical Legal Studies failed to adequately address race and racism. They drew inspiration from various sources, including the work of civil rights lawyers like Charles Hamilton Houston, sociological insights about institutional racism, and postmodern critiques of knowledge and power.

Derrick Bell’s groundbreaking work in the 1970s laid a crucial foundation. His “interest convergence” theory, set out in his 1980 Harvard Law Review analysis of Brown v. Board of Education, argued that advances in civil rights occur only when they align with white interests. This insight became central to CRT’s understanding of how racial progress unfolds in American society.

Core Elements and Principles

Critical Race Theory encompasses several key tenets that distinguish it from other approaches to studying race and racism.

First, CRT posits that race is not biologically real; it’s a human invention to justify unequal treatment. It also holds that racism is not merely individual prejudice, but a systemic feature of American society embedded in legal, political, and social institutions. This “structural racism” perspective emphasizes how seemingly neutral policies and practices can perpetuate racial inequality.

Second, CRT challenges the traditional civil rights approach that emphasizes color-blindness and incremental reform. Instead, CRT scholars argue that color-blind approaches often mask and perpetuate racial inequities. They advocate for race-conscious policies and a more aggressive approach to dismantling systemic racism.

Third, CRT emphasizes the importance of lived experience in the form of storytelling and narrative. Scholars use personal narratives, historical accounts, and counter-stories to challenge dominant narratives about race and racism. This methodological approach reflects CRT’s belief that experiential knowledge from communities of color provides crucial insights often overlooked by traditional scholarship.

Fourth, CRT introduces the concept of intersectionality, a term coined in 1989 by legal scholar Kimberlé Crenshaw. This framework examines how multiple forms of identity and oppression (including race, gender, class, and sexuality) intersect and compound each other’s effects.

Finally, CRT is explicitly activist-oriented with a goal of creating new norms of interracial interaction. Unlike purely descriptive academic theories, CRT aims to understand racism in order to eliminate it. This commitment to social transformation distinguishes CRT from more traditional academic approaches.

Evolution and Expansion

Since its origins in legal studies, CRT has expanded into numerous disciplines including education, sociology, political science, and ethnic studies. In education, scholars like Gloria Ladson-Billings and William Tate applied CRT frameworks to understand racial disparities in schooling. This educational application of CRT examines how school policies, curriculum, and practices contribute to achievement gaps and educational inequality.

Conservative Perspectives

Conservative critics of CRT raise several concerns about the theory and its applications. They argue that CRT’s emphasis on systemic racism is overly deterministic and fails to account for individual differences and the significant progress made in racial equality since the Civil Rights era. Many conservatives contend that CRT promotes a victim mentality that undermines personal responsibility and achievement.

From this perspective, CRT’s race-conscious approach is seen as divisive and potentially counterproductive. Critics argue that emphasizing racial differences rather than common humanity perpetuates division and resentment. They often prefer color-blind approaches that treat all individuals equally regardless of race.

Conservative critics also express concern about CRT’s application in educational settings, arguing that it introduces inappropriate political content into classrooms and may cause students to feel guilt or shame based on their racial identity. Some argue that CRT-influenced curricula amount to indoctrination rather than education.

Additionally, some conservatives view CRT as fundamentally un-American, arguing that its critique of American institutions and emphasis on systemic oppression undermines national unity and patriotism. They contend that CRT presents an overly negative view of American history and society.

Some conservatives go further, calling CRT a form of “anti-American radicalism.” They believe it rejects Enlightenment values—reason, objectivity, and universal rights—in favor of ideology and emotion. Others criticize CRT’s reliance on narrative and lived experience, arguing that it substitutes storytelling for empirical evidence.

Liberal Perspectives

Supporters of CRT argue that it provides essential tools for understanding persistent racial inequalities that other approaches fail to explain adequately. They contend that CRT’s focus on systemic racism accurately describes how racial disparities continue despite formal legal equality.

To them, CRT isn’t about blaming individuals; it’s about recognizing how systems work. Advocates say that color-blind policies often perpetuate inequality because they ignore how race has historically shaped opportunity. They see CRT as empowering marginalized communities to tell their stories and as pushing America closer to its own ideals of justice and equality.

Liberal and progressive thinkers see CRT as a reality check: a necessary tool for understanding and dismantling systemic racism. They argue that laws and policies that seem neutral can still produce racially unequal outcomes, for example, disparities in school funding or redlining in housing (denying loans or insurance based on neighborhood rather than individual qualifications).

From this perspective, CRT’s race-conscious approach is necessary because color-blind policies have proven insufficient to address entrenched racial inequities. Supporters argue that acknowledging and directly confronting racism is more effective than pretending race doesn’t matter.

Liberal defenders of CRT emphasize its scholarly rigor and empirical grounding, arguing that criticism often mischaracterizes or oversimplifies the theory. They point out that CRT is primarily an analytical framework used by scholars and graduate students, not a curriculum taught to elementary school children, as some critics suggest. Progressive educators also note that much of what critics call “CRT in schools” is really teaching about historical facts, such as slavery, segregation, and civil rights struggles, rather than law-school theory. They argue that banning CRT is less about protecting students and more about suppressing uncomfortable conversations about race and history.

Supporters also argue that CRT’s emphasis on storytelling and lived experience provides valuable perspectives that have been historically marginalized in academic discourse. They see this as democratizing knowledge production rather than abandoning scholarly standards.

Furthermore, many on the left argue that attacks on CRT represent attempts to silence discussions of racism and maintain the status quo. They view criticism of CRT as part of a broader backlash against racial justice efforts.

Why It Matters

You don’t have to buy every part of CRT to see why it struck a nerve. It forces us to ask uncomfortable but important questions: Why do some inequalities persist even after laws change? How do institutions carry the weight of history?

Whether you agree or disagree with CRT, it’s hard to deny that it has shaped how Americans talk about race. The theory challenges us to look beyond personal prejudice and ask how systems distribute power and privilege. Its critics, in turn, remind us that any theory of justice must preserve individual rights and shared civic values.

The real challenge may be learning to hold both ideas at once: that racism can be systemic, and that individuals should still be treated as individuals. CRT’s greatest value—and its greatest controversy—comes from forcing that tension into the open.

Sources:

JSTOR Daily. “What Is Critical Race Theory?” https://daily.jstor.org/what-is-critical-race-theory/ (Accessed December 3, 2025)

Harvard Law Review Blog. “Derrick Bell’s Interest Convergence and the Permanence of Racism: A Reflection on Resistance.” https://harvardlawreview.org/blog/2020/08/derrick-bells-interest-convergence-and-the-permanence-of-racism-a-reflection-on-resistance/ (March 24, 2023)

Bell, Derrick A., Jr. “Brown v. Board of Education and the Interest-Convergence Dilemma.” Harvard Law Review, Vol. 93, No. 3 (January 1980), pp. 518-533.

Columbia Law School. “Kimberlé Crenshaw on Intersectionality, More than Two Decades Later.” https://www.law.columbia.edu/news/archive/kimberle-crenshaw-intersectionality-more-two-decades-later

Crenshaw, Kimberlé. “Demarginalizing the Intersection of Race and Sex: A Black Feminist Critique of Antidiscrimination Doctrine, Feminist Theory and Antiracist Politics.” 1989.

Britannica. “Richard Delgado | American legal scholar.” https://www.britannica.com/biography/Richard-Delgado

Wikipedia. “Critical Race Theory.” https://en.wikipedia.org/wiki/Critical_race_theory (Updated December 31, 2025)

MTSU First Amendment Encyclopedia. “Critical Race Theory.” https://www.mtsu.edu/first-amendment/article/1254/critical-race-theory (July 10, 2024)

Delgado, Richard and Jean Stefancic. “Critical Race Theory: An Introduction.” New York University Press, 2001 (2nd edition 2012, 3rd edition 2018).

Teachers College Press. “Critical Race Theory in Education.” https://www.tcpress.com/critical-race-theory-in-education-9780807765838

American Bar Association. “A Lesson on Critical Race Theory.” https://www.americanbar.org/groups/crsj/publications/human_rights_magazine_home/civil-rights-reimagining-policing/a-lesson-on-critical-race-theory/

NAACP Legal Defense and Educational Fund. “What is Critical Race Theory, Anyway? | FAQs.” https://www.naacpldf.org/critical-race-theory-faq/ (May 6, 2025)

The illustration was generated by the author using Midjourney.