When most people think of the American Revolution, they picture Continental soldiers marching across snowy battlefields or patriot militias defending their homes. But there’s another group that played a crucial role in securing American independence: the Continental Marines. These amphibious warriors served in America’s nascent naval force and proved their worth on both land and sea during the eight-year struggle for independence.

The Continental Marines, established in 1775, served as America’s first organized marine force during the Revolutionary War before being disbanded in 1783, laying the foundation for what would eventually become the modern U.S. Marine Corps. Though short-lived, the original Marine Corps played a significant role in America’s fight for independence, setting precedents that the modern Marine Corps still honors today.

The Legislative Foundation

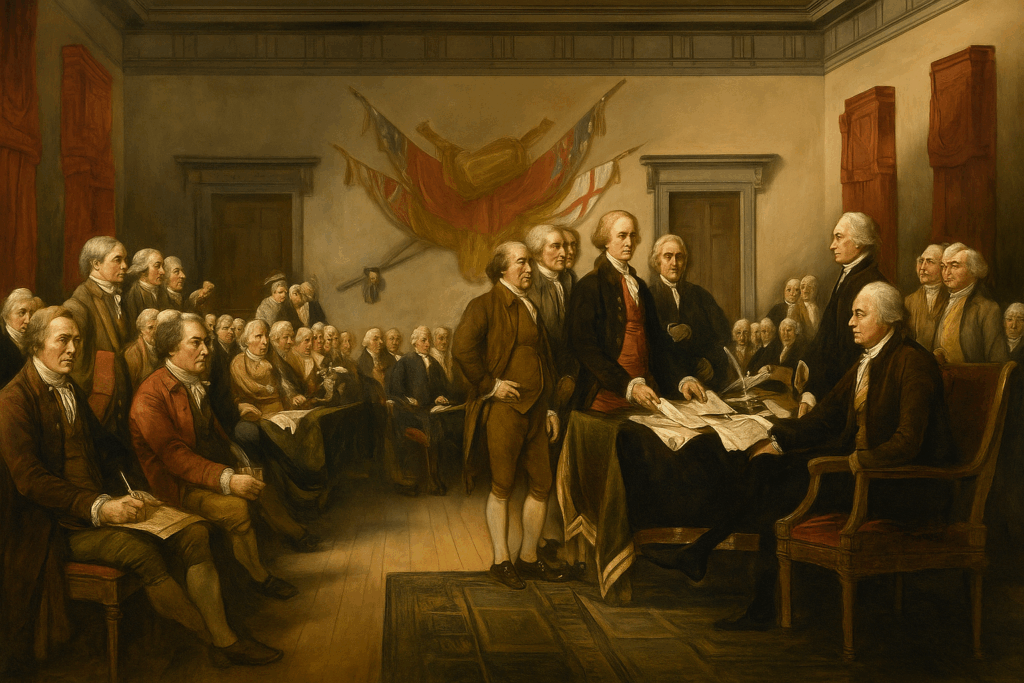

By the fall of 1775, the American colonies were no longer engaged in mere protest—they were in open rebellion against the British Empire. Battles had already been fought at Lexington, Concord, and Bunker Hill. The Continental Congress, led by figures like John Adams, had begun to organize a Continental Army under George Washington’s command. But many in the Congress, especially Adams, believed a navy was also essential to challenge British power at sea and disrupt its supply lines.

With a navy, it was reasoned, must come Marines—soldiers trained to serve aboard ships, conduct landings, enforce discipline, and fight in close quarters during boarding actions. This model was based on the British Royal Marines, a corps with a long and respected tradition.

The Continental Marines came into existence through a resolution passed by the Second Continental Congress on November 10, 1775. This date, which Marines still celebrate today as their birthday, marked a pivotal moment in American military history.

The Continental Marine Act of 1775 decreed: “That two battalions of Marines be raised consisting of one Colonel, two lieutenant-colonels, two majors and other officers, as usual in other regiments; that they consist of an equal number of privates as with other battalions, that particular care be taken that no persons be appointed to offices, or enlisted into said battalions, but such as are good seamen, or so acquainted with maritime affairs as to be able to serve for and during the present war with Great Britain and the Colonies.”

The legislation was part of Congress’s broader effort to create a Continental Navy capable of challenging British naval supremacy. The resolution was drafted by future U.S. president John Adams and adopted in Philadelphia. This wasn’t just about creating another military unit; Congress recognized that naval warfare required specialized troops who could fight effectively both on ships and on shore. The concept wasn’t entirely new, since European navies had long employed marines for similar purposes, but the Continental Marines represented America’s first organized attempt to create a professional amphibious force. The term “amphibious” didn’t come into use in a military setting until the 1930s, so at the time they would likely have been informally referred to as a naval landing force.

Recruitment: From Taverns to the Fleet

The recruitment of the Continental Marines has become the stuff of legend, particularly the story of their traditional birthplace at Tun Tavern in Philadelphia. Though legend places its first recruiting post at Tun Tavern, historian Edwin Simmons surmises that it may as likely have been the Conestoga Waggon [sic], a tavern owned by the Nicholas family. Regardless of which tavern served as the primary recruiting station, the Marines can claim the unique distinction of being the only military branch “born in a bar”.

The first Commandant of the Marine Corps was Captain Samuel Nicholas, and his first Captain and recruiter was Robert Mullan, the owner of Tun Tavern. Samuel Nicholas, a Quaker-born Philadelphia native and experienced mariner, was commissioned on November 28, 1775, becoming the Continental Marines’ senior officer and only commandant throughout their existence. While his background as a Philadelphia tavern keeper may seem unusual for a military leader, his connections in the maritime community proved invaluable for recruiting. The requirement for maritime experience shaped the character of the force from its inception.

The Marines faced immediate recruitment challenges. Originally, Congress envisioned using the Marines for a planned invasion of Nova Scotia. They expected the Marines to draw personnel from George Washington’s Continental Army. However, Washington was reluctant to part with his soldiers, forcing the Marines to recruit independently, primarily from the maritime communities of Philadelphia and New York.

By December 1775, Nicholas had raised a battalion of approximately 300 men, organized into five companies, though this fell short of the original plan for two full battalions. Robert Mullan helped to assemble the fledgling fighting force. Plans to form the second battalion were suspended indefinitely after several British regiments-of-foot and cavalry landed in Nova Scotia, making the planned naval assault impossible.

Organization for Dual Service

The Continental Marines were organized as a flexible force capable of serving both aboard ships and on land. For shipboard service, Marines were organized into small detachments that could be distributed across the Continental Navy’s vessels. Their organization reflected their multi-purpose mission: they served as security forces protecting ship officers, repelled boarders and joined boarding parties during naval engagements, and acted as assault troops for amphibious operations. Marksmanship received particular emphasis, a tradition that continues to this day, as Marines often served as sharpshooters in naval engagements, targeting enemy officers and sailors from the rigging and fighting tops of ships.

During the Revolutionary War, the Continental Marines’ uniform directives specified a green jacket with white facings and cuffs. However, when the first sets of uniforms were actually ordered and delivered, red facings were substituted for white. The likely reason was supply availability: red cloth was easier to obtain from Continental or captured British stores. The most authoritative description comes from Captain Samuel Nicholas, who wrote from Philadelphia in 1776 that Marines were outfitted in “green coats faced with red, and lined with white”.

The uniform also included a high leather collar, or stock, ostensibly to protect the neck against sword slashes, although there is some evidence that it may actually have been intended to improve posture. This distinctive uniform item helped establish their identity as an elite force and eventually led to their treasured nickname “leathernecks”.

Shipboard Service and Naval Operations

The Continental Marines’ role aboard ship was multifaceted and crucial to naval operations. Their most important duty was to serve as onboard security forces, protecting the captain of a ship and his officers. During naval engagements, in addition to manning the cannons along with the crew of the ship, Marine sharpshooters were stationed in the fighting tops of a ship’s masts specifically to shoot the opponent’s officers and crew. These duties reflected centuries of naval tradition and drew on the example of the British Marines.

The Marines’ first major naval operation came in early 1776, when five companies joined Commodore Esek Hopkins’ Continental Navy squadron on its first cruise in the Caribbean. This deployment demonstrated their value as both shipboard security and assault troops, setting the pattern for their service throughout the war.

Major Land-Based Actions

Despite their naval origins, the Continental Marines proved equally effective in land combat. Their most famous early action was the landing at Nassau on the island of New Providence in the Bahamas in March 1776. The landing was the first by Marines on a hostile shore. It was led by Captain Nicholas and consisted of 250 Marines and sailors. After 13 days, the Marines had captured two forts and the Government House, occupied Nassau, and seized cannons and large stores of supplies. While they missed capturing the gunpowder stores (which had been evacuated before their arrival), the raid demonstrated American capability to strike British positions anywhere.

Though modest in scale, this operation carried major symbolic weight and established the Marines as America’s premier amphibious force. The operation did not decisively alter the balance of the war, but it foreshadowed the Marines’ enduring identity as a seafaring, expeditionary force. Today, the Battle of Nassau is remembered less for the supplies seized than for what it represented: the moment the Continental Marines stepped onto the world stage.

Other notable operations included raids on British soil itself. In April of 1778, Marines under the command of John Paul Jones made two daring raids, one at the port of Whitehaven, in northwest England, and the second later that day at St. Mary’s Isle. These operations brought the war directly to British territory, demonstrating American reach and resolve. While the raids had no strategic impact on the outcome of the war, they were a great morale booster when reports, though largely exaggerated, reached the rebellious colonies.

Official Marine Corps history also acknowledges Marine participation in the Battle of Princeton, though it wasn’t a major Marine engagement. Marines from Captain William Shippen’s company, who had been serving aboard Continental Navy ships, participated in this battle as a part of Cadwalader’s Brigade on Washington’s flank. Some Marines were detached to augment the artillery, with a few eventually transferring to the army. However, the Marines’ role was relatively minor compared to their more significant naval actions during this period.

The Gradual Decline

As the Revolutionary War progressed, the Continental Marines faced increasing challenges. Financial constraints plagued the Continental forces throughout the war, and the Marines were no exception. The Continental Congress struggled to fund and supply all military branches, and the relatively small Marine force often found itself at a disadvantage competing for resources with the larger Continental Army and Navy.

Recruitment became increasingly difficult as the war dragged on. After the early campaigns, Nicholas’s four-company battalion discontinued independent service, and remaining Marines were reassigned to shipboard detachments. Their number had been reduced by transfers, desertion, and the loss of eighty Marines through disease.

The Continental Navy also faced severe challenges that directly impacted the Marines. Many ships were captured, destroyed, or sold, leaving Marines without their primary operational platform. As the naval war shifted toward privateering and smaller-scale operations, the need for organized Marine units diminished.

Beginning in February 1777, two companies of Marines either transferred to Morristown to assume roles in the Continental artillery batteries or left the service altogether. This transfer of Marines to army artillery units reflected the practical reality that their specialized skills were needed elsewhere as the Continental forces adapted to changing circumstances.

Disbanded at War’s End

The end of the Revolutionary War marked the end of the Continental Marines as an organized force. Both the Continental Navy and Marines were disbanded in April 1783. Although a few individual Marines briefly stayed on to provide security for the remaining U.S. Navy vessels, the last Continental Marine was discharged in September 1783.

The last official act of the Continental Marines was escorting a stash of French silver crowns (coins) from Boston to Philadelphia, a loan from Louis XVI to help establish the Bank of North America. This final mission, conducted in 1781, symbolically linked the Marines to the new nation’s financial foundations even as their military role ended.

The disbanding reflected broader American attitudes toward standing military forces. Having won their independence, Americans were skeptical of maintaining large military establishments that might threaten republican government. The Continental Congress, facing financial pressures and political opposition to permanent military forces, chose to disband both the Navy and Marines.

Legacy

The Continental Marines’ contribution to American independence was significant despite their small numbers. In all, over the course of seven years of battle, the Continental Marines had only 49 men killed and just 70 more wounded, out of a total force of roughly 130 Marine officers and 2,000 enlisted. These relatively low casualty figures reflected both their effectiveness and the limited size of the force.

Rising tensions with Revolutionary France in the late 1790s led to the Quasi-War, prompting Congress to reestablish the Navy in 1798. On July 11 of that year, President John Adams signed legislation formally creating the United States Marine Corps as a permanent branch of the military, under the jurisdiction of the Department of the Navy. This new Marine Corps inherited the traditions, mission, and esprit de corps of its Revolutionary War predecessors. Despite the gap between the disbanding of the Continental Marines and the establishment of the new United States Marine Corps, Marines honor November 10, 1775, as the official founding date of their Corps.

The Continental Marines established precedents that would shape American military doctrine for more than two centuries. The Revolutionary War not only led to the founding of the United States (Continental) Marine Corps but also highlighted for the first time the versatility for which Marines have come to be known. They fought on land, they fought at sea on ships, and they performed amphibious assaults.

The Continental Marines represented a crucial innovation in American military organization. Born from congressional resolution and tavern recruitment, these maritime warriors proved their worth in battles from the Caribbean to the British Isles. Though disbanded with the war’s end, their legacy lives on in the traditions and spirit of the modern Marine Corps. While their numbers were small and their existence brief, their impact on American military tradition proved lasting and significant.

“America at 250: A Revolution Remembered… or Forgotten?”

By John Turley

On May 10, 2025


I’m old enough to remember the 200th anniversary of the American Revolution. Bicentennial symbols were everywhere: Liberty Bells, eagles, and the ubiquitous Bicentennial logo of the red, white, and blue stylized five-point star. They could be found on hats, T-shirts, socks, soft drink cups, beer cans, and even a special “Spirit of ’76” edition of the Ford Mustang II. Commemorative events and celebrations were being planned everywhere, and people had “bicentennial fever”.

But the 250th anniversary is not attracting that same kind of attention or interest. I wonder why that is. Perhaps it’s that the name for a 250th anniversary, Semiquincentennial, doesn’t seem to roll off the tongue the way Bicentennial does. But I suspect it’s far more than just a tongue-twisting name.

The Bicentennial came after a decade of national trauma. The Vietnam War, Watergate, and the civil rights struggles had all roiled the country. By 1976, most Americans wanted to feel good about the country again. It became a giant, colorful celebration of “American resilience.”

While the 250th anniversary of the American Revolution is being marked by numerous events, commemorations, and official proclamations, most are local, and it has not yet captured widespread public attention or generated the scale of national excitement seen during previous milestone anniversaries.

The anniversary arrives at a time of deep political polarization, which has complicated celebration plans. There is an ongoing debate within the group tasked with planning the celebration, the U.S. Semiquincentennial Commission, about how to present American history. Some members advocate for a traditional, celebratory approach focusing on the Founding Fathers and patriotic themes. Others push for a more inclusive narrative that acknowledges the complexities of American history, including the experiences of women, enslaved people, Indigenous communities, and other marginalized groups.

Beyond the commission itself, some historians note that the “history wars”—ongoing disputes throughout society over how U.S. history should be taught and remembered—have made it harder to generate broad, enthusiastic buy-in for the anniversary among the general public.

Commemorations in places like Lexington and Concord have seen anti-Trump protesters carrying signs such as “Resist Like It’s 1775” and “No Kings,” explicitly drawing parallels between opposition to King George III and contemporary resistance to what they perceive as autocratic tendencies in current leadership. At the reenactment of Patrick Henry’s “Give Me Liberty or Give Me Death” speech, Virginia Governor Glenn Youngkin was met with boos and protest chants, highlighting how the Revolution’s legacy is being invoked in current political struggles.

While some organizers and historians hope the anniversary can serve as a unifying moment—emphasizing that “patriotism should not be a partisan issue”—the reality is that commemorations have often become forums for expressing contemporary political grievances and anxieties. The presence of both celebratory and dissenting voices at these events reflects the enduring debate over what it means to be American and who gets to define that identity. The complexity and messiness of American history, combined with current societal tensions, may dampen the celebratory mood and make it harder for people to connect emotionally with the anniversary.

Even the 250th logo has become a source of dispute, although it is one of the few areas of disagreement that is nonpartisan and tends to be about the stylistic and artistic merits of the logo. Proponents of the new logo appreciate its modern and inclusive design, emphasizing that the flowing ribbon represents “unity, cooperation, and harmony,” and reflects the nation’s aspirations as it commemorates this milestone. Detractors are concerned about the legibility of the “250” and the lack of traditional American symbols, such as stars, which could have reinforced its patriotic theme.

Surveys by history-related organizations suggest that most Americans are not yet thinking about the 250th anniversary. The run-up to 2026 may see increased attention, but as of now, the anniversary has not broken through as a major topic of national conversation. If the anniversary continues to be viewed as a contentious partisan undertaking, it may never gain widespread popularity, and the general public may choose to stay away.

A friend who is a member of the West Virginia 250th committee told me that they had an initial meeting at which nothing was accomplished, and they have had no meeting since. It seems to me that it is up to us, the citizens, to ensure that the 250th anniversary of the American Revolution is appropriately remembered. We don’t have to live in an area where a Revolutionary War event occurred for us to recognize its events. Here in West Virginia, in October of 2024, we commemorated the 250th anniversary of the Battle of Point Pleasant, which many consider a precursor to the American Revolution. This event was not organized by any state or national group. It was the result of efforts on the part of the City of Point Pleasant and the West Virginia Sons of the American Revolution.

We do not need to depend on the government; we the people can hold local commemorations of revolutionary events that occurred in other areas. We can hold commemorations of the Battle of Bunker Hill, the signing of the Declaration of Independence, the Battle of Saratoga and many other events. It will take the initiative of local people to organize these events.

It will be our great shame if we allow the commemoration of an event so significant in both American and world history to be turned into something that divides us rather than unites us and strengthens our common bond.