When most people think of Parkinson’s disease, they picture the characteristic tremor—that involuntary shaking that has become almost synonymous with the condition. But the reality is far more complex than just one visible symptom. Let’s dig into what’s actually happening in the brain, how doctors figure out what’s going on, and what living with this condition really looks like.

What Causes Parkinson’s Disease?

Here’s where things get frustrating for researchers: despite decades of study, scientists still don’t know exactly what causes the nerve cells in the brain to die. I’m going to apologize in advance because I’m going to be using a lot of “doctor talk”—no way around it.

What we do know is that nerve cells (neurons) in the substantia nigra portion of the basal ganglia—an area of the brain controlling movement—become impaired or die, and these neurons normally produce dopamine, an important brain chemical. When these cells stop working properly, dopamine levels drop, and that’s when movement problems begin showing up.

But dopamine isn’t the whole story. People with Parkinson’s also lose nerve endings that produce norepinephrine, the main chemical messenger of the sympathetic nervous system, which helps explain why the disease affects so much more than just movement—things like blood pressure, digestion, and energy levels all take a hit.

Most Parkinson’s cases are idiopathic, meaning the cause is unknown, though contributing factors have been identified. Current thinking suggests a complicated mix of genetic and environmental factors. About 5% to 10% of cases begin before age 50, and these early-onset forms are often, though not always, inherited.

Some risk factors have emerged from research: age is the most significant, with about 1% of those over 65 and around 4.3% of those over 85 affected. Traumatic brain injury significantly increases risk, especially if recent, and repeated head injuries from contact sports can cause what’s called post-traumatic parkinsonism. Muhammad Ali is a classic example of this.

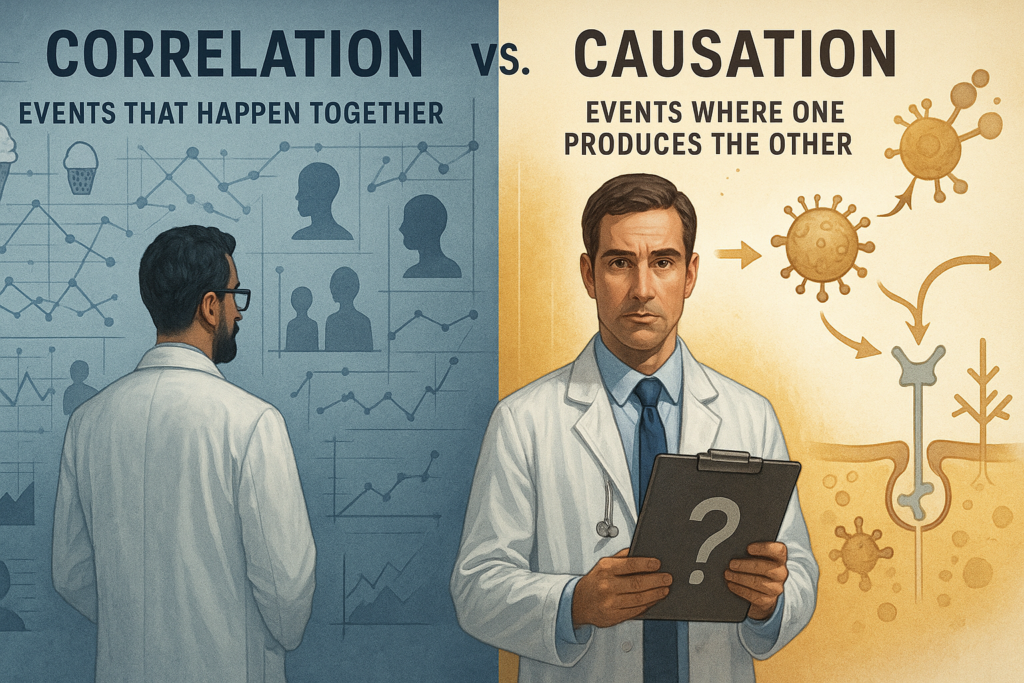

Exposure to pesticides and industrial chemicals has also been identified as a risk factor. Interestingly, large epidemiologic studies consistently show that people who smoke have a lower risk of being diagnosed with Parkinson’s disease than never‑smokers, although smoking is still strongly discouraged because of its many harmful health risks. Large cohort studies in the U.S. and Europe generally find no direct association between alcohol consumption and Parkinson’s disease. A few observational studies show that moderate drinkers have slightly lower Parkinson’s rates. However, researchers believe this may be due to reverse causation (people in early or undiagnosed stages often reduce drinking because of GI or mood changes) and lifestyle confounders (moderate drinkers may differ in socioeconomic status, diet, or activity level). So, the “protective” effect is considered speculative, not causal.

The Symptoms: More Than Just Shaking

The hallmark movement symptoms, which doctors call “motor symptoms,” are what usually bring people to the doctor. Slowed movement, called bradykinesia, is required for a Parkinson’s diagnosis. People often describe it as muscle weakness, though it’s really about control, not strength. The classic tremor, stiffness, and balance problems round out the main movement issues. Patients frequently show reduced arm swing, shuffling gait, difficulty initiating movement or turning, masked facial expression, decreased blinking, and soft or monotone speech.

But here’s what often surprises people: many individuals later diagnosed with Parkinson’s notice that prior to experiencing stiffness and tremor, they had sleep problems, constipation, loss of smell, and restless legs. These “prodromal symptoms” can show up years before the movement problems become obvious. Other early signs include mood disorders like anxiety and depression.

The cognitive side deserves attention too. Some people experience changes in cognitive function, including problems with memory, attention, and the ability to plan and accomplish tasks. The exact share is hard to pin down because these changes overlap with age-related memory problems, but 20% at the time of diagnosis is a commonly cited number. More contested is how many develop Parkinson’s dementia, with estimates ranging from 20% all the way to 85%.

How Doctors Make the Diagnosis

Here’s something that might surprise you: there are currently no blood or laboratory tests to diagnose non-genetic cases of Parkinson’s. The standard diagnosis is clinical, meaning there’s no test that can give a conclusive result—certain physical symptoms need to be present.

Doctors typically diagnose Parkinson’s by taking a detailed medical history and performing a neurological examination. If symptoms improve after starting medication, that’s another indicator that the person has Parkinson’s.

There are some imaging tools available. The FDA approved an imaging scan called the DaTscan in 2011, which allows doctors to see detailed pictures of the brain’s dopamine system using a radioactive drug and a SPECT scanner. This scan can’t definitively diagnose Parkinson’s, though it helps rule out conditions that mimic it. A hallmark of Parkinson’s is the buildup of misfolded alpha-synuclein proteins (Lewy bodies) inside neurons. Whether this is a cause, an effect, or both is still under study, and this part of the science remains somewhat speculative.

Recently, researchers developed something more promising: the alpha-synuclein seeding amplification assay can detect abnormal alpha-synuclein in spinal fluid and may detect Parkinson’s in people who haven’t been diagnosed yet. The catch? It requires a spinal tap and isn’t widely available, though scientists are working on blood and saliva tests.

The early diagnostic challenge is real. Many disorders can cause similar symptoms, and people with Parkinson’s-like symptoms from other causes are sometimes said to have parkinsonism, which includes conditions like multiple system atrophy and Lewy body dementia that require different treatments.

What to Expect: The Prognosis

Let’s address the big question: how does Parkinson’s affect life expectancy? The news here is better than you might think. The average life expectancy of a person with Parkinson’s is generally the same as for someone without the disease.

More specifically, average survival after diagnosis has increased by about 55% since 1967, rising to more than 14.5 years. Modern treatments have made a huge difference. Research indicates that those with Parkinson’s and normal cognitive function appear to have a largely normal life expectancy.

That said, timing matters. Research from 2020 suggests that people who receive a diagnosis before age 70 usually experience a greater reduction in life expectancy, and males with Parkinson’s may have a greater reduction in life expectancy than females.

The disease is progressive, meaning it gets worse over time, but symptoms and progression vary from person to person, and neither you nor your doctor can predict which symptoms you’ll get, when, or how severe they’ll be. The tremor-dominant type usually has a more favorable prognosis than the hypokinetic type.

What actually causes death in advanced Parkinson’s? Advanced symptoms can cause falls, pressure ulcers, swallowing difficulties and general frailty, all of which are linked to death. Aspiration pneumonia—when you inhale food or liquid into the lungs—is the leading cause of death for people with Parkinson’s.

Managing the Disease

Currently, there’s no cure for Parkinson’s, but medications or surgery can improve many of the movement symptoms.

The gold standard medication is levodopa (often combined with carbidopa as Sinemet). Healthcare providers use levodopa cautiously and they commonly combine it with other medications to keep your body from processing it before it enters your brain. This helps avoid side effects like nausea, vomiting, and low blood pressure when standing up. The tricky part? Over time, the way your body uses levodopa changes, and it can lose effectiveness.

Beyond levodopa, doctors use MAO-B inhibitors and dopamine agonists. As the disease progresses, these medications become less effective and may cause involuntary muscle movements. When drugs stop working well, there are surgical options to treat severe motor symptoms.

The main surgical treatment today is called deep brain stimulation (DBS). It is often described as the most important therapeutic advancement since the development of levodopa, and it’s been FDA-approved since the late 1990s. A surgeon places thin metal wires called electrodes into one or both sides of the brain, in specific areas that control movement. A second procedure implants an impulse generator battery under the collarbone or in the abdomen. Similar to a heart pacemaker and about the size of a stopwatch, this device delivers electrical stimulation to those targeted brain areas.

A newer treatment is focused ultrasound. Guided by MRI, high-intensity, inaudible sound waves are emitted into the brain, and where these waves cross, they create high energy that destroys a very specific area connected to tremor. It’s considered non-invasive, and the FDA has approved it for Parkinson’s tremor that doesn’t respond to medications.

Don’t underestimate lifestyle interventions either. Physical therapy can improve balance and address muscle stiffness, and regular exercise improves strength, flexibility, and balance. Eating a balanced diet helps—drinking plenty of water and eating enough fiber reduces constipation, while omega-3 fats and magnesium may boost cognition and help with anxiety.

Parkinson’s disease sits at the intersection of aging, genetics, environment, and biology. Diagnosis is clinical, progression is gradual and variable, and treatment has become increasingly sophisticated. While it remains incurable, early diagnosis, personalized medication plans, targeted therapies like DBS, and consistent exercise allow many people to maintain meaningful independence for years.

The key message from specialists? Treatment makes a major difference in keeping symptoms from having worse effects, and adjustments to medications and dosages can hugely impact how Parkinson’s affects your life.

The Enigma of Magical Thinking: From Everyday Enchantment to Political Discourse

By John Turley

On December 10, 2025

In Commentary

Have you ever been talking to someone when you started to think, “How in the world can they believe that?” They may have been engaging in magical thinking. But don’t feel too superior because most likely you have been guilty of the same thing.

Magical thinking is one of those fascinating quirks of human psychology that shows up everywhere—from your friend who won’t talk about their job interview until it’s over, to major political movements that shape our world. At its core, it’s the belief that our thoughts, words, or actions can influence events in ways that completely ignore standard cause-and-effect logic.

What We’re Really Talking About

Magical thinking isn’t new. Our ancestors practiced animism, believing spirits lived in everything around them. They created rituals to appease these spirits or tap into their power. Fast forward to today, and despite all our scientific advances, these patterns of thinking haven’t gone anywhere—they’ve just evolved.

It’s essentially a cognitive bias where we connect events that aren’t truly linked. This typically happens when we’re facing uncertainty, stress, or situations where we feel powerless. The thinking pattern gives us a psychological safety net—a feeling that we’re in control.

How It Shows Up in Daily Life

Superstitions and Rituals

Knocking on wood to prevent bad luck is a universal example. There’s zero logical connection between rapping your knuckles on a wooden surface and your future, but people do it anyway because it feels like taking action against uncertainty.

Athletes are notorious for this. That “lucky” jersey, the pre-game meal eaten in exactly the same order, the specific warm-up routine—these rituals don’t really affect performance, but they can boost confidence and calm nerves, which indirectly helps.

Lucky Charms and Talismans

Rabbit’s feet, four-leaf clovers, special coins: lots of people carry objects they believe bring good fortune. These beliefs come from cultural traditions and personal experiences. While there’s no scientific backing for their power, the comfort they provide is real.

The Jinx Effect

Ever avoided talking about something good that might happen because you didn’t want to “jinx” it? I worked in emergency rooms for many years, and no one would ever use the word “quiet” for fear that it would cause a sudden rush of ambulances. That’s magical thinking: connecting your words to external outcomes in a totally irrational way.

Health Decisions

This gets more serious when magical thinking influences medical choices. Some people strongly believe in homeopathic remedies or alternative therapies that lack scientific validation. Interestingly, the placebo effect demonstrates how powerful belief can be—people sometimes experience limited health improvements simply because they believe a treatment works, although these effects are most common in relief of mild to moderate pain.

Gambling Behaviors

Casinos thrive on magical thinking. Blowing on dice, wearing lucky clothes, or believing you’re “due” for a win after several losses—these are all examples of the illusion of control. Gamblers think they can influence random outcomes through specific actions, which can fuel persistent gambling even when they’re losing money.

When Magical Thinking Enters Politics

Here’s where things get more complex and consequential. Magical thinking doesn’t just affect personal decisions—it shapes entire political movements and policy debates.

Conspiracy Theories

QAnon represents one of the most striking modern examples. Followers believe a secret group of powerful figures runs a global operation, and that certain political leaders possess almost supernatural abilities to fight against it. Despite zero credible evidence, this belief system has attracted significant followings, demonstrating how magical thinking can create entire alternate realities in the political sphere.

Pandemic Responses

COVID-19 brought magical thinking into sharp relief. Some people blamed 5G technology for causing the virus, leading to actual attacks on cell towers in multiple countries. Others promoted unproven treatments as miracle cures, despite scientific evidence showing they didn’t work and in some cases were harmful. The desire for simple answers to a complicated crisis made people vulnerable to these beliefs.

Vaccine denial is another pandemic-related example of magical thinking. It attributes harmful effects to vaccines despite extensive scientific evidence to the contrary, while simultaneously holding that alternative approaches (like “natural immunity” alone) possess special protective powers.

Economic Policies

“Trickle-down economics”—the idea that tax cuts for wealthy individuals automatically generate economic growth and increased government revenue—often functions as magical thinking in policy debates. This theory simplifies incredibly complex economic dynamics and, according to multiple economic analyses, lacks consistent empirical support. Critics point out it ignores the nuances of fiscal policy and income distribution.

Climate Change

Despite overwhelming scientific consensus, some political movements deny climate change reality. This sometimes involves believing that natural cycles alone explain everything, or that technological solutions will magically appear without significant policy intervention. This type of thinking often protects existing economic interests or ideological positions.

Why Our Brains Do This

Pattern Recognition

Humans are pattern-seeking machines. We’re wired to spot connections, even when they don’t exist—a tendency called apophenia. This helped our ancestors survive—better to assume that rustling bush is a tiger than to ignore it—but it also leads us to form superstitions and magical beliefs.

The Comfort Factor

When life feels uncertain or stressful, magical thinking offers psychological comfort. Rituals and lucky charms reduce anxiety and give us a sense of agency, even if that control is illusory.

Cultural Transmission

Many superstitions and magical beliefs get passed down through generations, becoming woven into cultural norms. When we see others engaging in these behaviors, it reinforces them. Social acceptance is powerful.

Political Advantages

In politics specifically, magical thinking persists because it:

Finding the Balance

Here’s the thing: magical thinking isn’t purely negative. It serves real psychological functions—helping us cope with uncertainty, reducing anxiety, and giving us a sense of control when the world feels chaotic.

The problem comes when we rely on it too heavily. Avoiding medical treatment for unproven remedies can have serious health consequences. Basing policy decisions on magical thinking rather than evidence can affect millions of people. The key is balance.

The Takeaway

Magical thinking connects us to our shared human history, from ancient animistic beliefs to modern political movements. It reveals how our minds constantly work to make sense of an unpredictable world. By understanding these cognitive patterns, we can appreciate their psychological benefits while staying alert to their limitations.

Whether we’re knocking on wood or evaluating political claims, recognizing magical thinking helps us become more critical consumers of information. We can honor the comfort these beliefs provide while still grounding important decisions in evidence and rational analysis.

The enchantment isn’t going anywhere—it’s part of being human. But awareness of it? That’s our best tool for navigating between the rational and the magical in both our personal lives and our shared political reality.