The Evolution of the Marine Corps Hymn

The opening line of the Marine Corps Hymn, “From the halls of Montezuma to the shores of Tripoli,” stands as one of the most recognizable phrases in American military tradition. But what are the halls of Montezuma? Where are the shores of Tripoli? Why are they important to Marines?

Few realize that this iconic song has undergone subtle but significant changes throughout its history, reflecting the Marine Corps’ evolution from a small naval force into a modern, multi-domain fighting organization.

The Original Battles

The hymn’s opening line commemorates two pivotal early battles that established the Marine Corps’ reputation for courage and effectiveness. “The Halls of Montezuma” refers to the Battle of Chapultepec during the Mexican-American War in September 1847. Chapultepec Castle, perched on a hill overlooking Mexico City, was built on the site where the Aztec emperor Montezuma II once maintained palaces and gardens. The fortress housed the Mexican military academy and served as a key defensive position protecting the capital. The name “Montezuma” evokes the grandeur of ancient Mexico, even though Montezuma himself had no connection to the castle, which was built in the late 18th century, long after his death. It was a bit of poetic license, common in martial songs, meant to evoke the exotic location and the historic weight of the conquest.

During the assault on Chapultepec, Marines fought alongside Army units in a fierce battle against heavily fortified positions. The Marines’ performance in this engagement helped secure the American victory and opened the path to Mexico City, whose capture effectively ended the war. The battle demonstrated that Marines could excel not just in naval operations but also in major land campaigns.

The “blood stripe”—the red stripe on Marine dress blue trousers—is traditionally said to honor the Marines who fell at Chapultepec, although this is more legend than documented fact.

The second half of the line, “to the shores of Tripoli,” reaches back even further to the First Barbary War (1801-1805). In this conflict, a small force of Marines participated in the capture of Derna, a fortified city on the Libyan coast. Led by Lieutenant Presley O’Bannon, the Marines marched across the desert with a motley force of mercenaries and Arab allies to attack the Barbary pirates’ stronghold. The success at Derna marked the first time the American flag was raised over a fortress in the Old World and established the Marines’ reputation for discipline, effectiveness, and fighting in exotic, far-flung locations.

Marine Corps officers still carry a Mameluke sword modeled on the one that, according to tradition, Hamet Karamanli, the exiled claimant to the throne of Tripoli, presented to Lt. O’Bannon in recognition of his valor.

The Hymn’s Origins

The Marine Corps Hymn emerged sometime in the 1840s or 1850s, shortly after the Mexican-American War. It was not officially adopted until 1929, when the Commandant of the Marine Corps, Major General John A. Lejeune, issued an order making it the official song of the Corps. Several variations of the lyrics were in use before then, and the adoption order standardized the words.

Unlike many military songs composed by established musicians, the hymn’s authorship remains uncertain. The melody was borrowed from Jacques Offenbach’s comic opera Geneviève de Brabant, but the words appear to have been written by Marines themselves, possibly at the Marine Barracks in Washington, D.C.

The original version celebrated these early victories with straightforward language: “From the halls of Montezuma to the shores of Tripoli, we fight our country’s battles on the land as on the sea.” This phrasing reflected the Marine Corps’ dual nature as both a naval force and an expeditionary force capable of fighting anywhere American interests were threatened.

The Aviation Revolution

For nearly a century, the hymn remained largely unchanged. However, as the Marine Corps expanded its capabilities during the early 20th century, the traditional wording began to seem incomplete. The establishment of a Marine Aviation Company in 1915 and its expansion during World War I marked a significant evolution of the Corps’ mission and capabilities.

By World War II, Marine aviation had become a crucial component of the Corps’ fighting power. Marine pilots flew close air support missions, fought in aerial combat, and provided reconnaissance for ground forces. The Pacific theater, where Marines conducted their most famous campaigns, showcased the integration of air, land, and sea operations in ways that the original hymn could not capture.

The Historic Change

Recognition of this evolution came on November 21, 1942, when the Commandant of the Marine Corps authorized an official change to the hymn’s first verse. The modification was originally proposed by Gunnery Sergeant H.L. Tallman, who recognized that the traditional phrasing no longer adequately described the Marines’ expanding role.

The fourth line of the first verse was changed from “on the land as on the sea” to “in the air, on land and sea.” This seemingly small addition carried profound significance. It acknowledged that Marines now operated in three environments rather than two, reflecting the Corps’ transformation into a modern, combined-arms force.

The timing of this change was significant. Coming just as the United States was fully engaged in World War II, the revision recognized the vital role Marine aviation was playing in Pacific operations. In the skies over Guadalcanal, where Marine aviators were fighting as the change was approved, and later in campaigns such as Iwo Jima and Okinawa, Marine pilots proved that air power was no longer a supporting element but an integral part of Marine Corps operations.

Legacy and Meaning

The evolution of the Marine Corps Hymn’s opening stanza reflects a broader story about military adaptation and institutional identity. The original battles at Chapultepec and Derna established the Marines’ reputation for fighting in distant, challenging environments. The addition of “air” recognized that this tradition continued but now extended into new realms of warfare.

Today, when Marines sing “From the halls of Montezuma to the shores of Tripoli,” they honor not just those early victories but the entire span of Corps history. The hymn connects modern Marines with their predecessors while acknowledging how the institution has grown and changed. The simple addition of the words “in the air” in 1942 ensured that the Marine Corps Hymn would remain relevant for generations of Marines who would fight not just on land and sea but in the air as well, preserving the past while embracing the future.

The U.S. Public Health Service: Guardians of America’s Health

By John Turley

On July 3, 2025


The United States Public Health Service (USPHS) has quietly served as the backbone of the nation’s public health infrastructure for over two centuries. From its beginnings as a maritime medical service to its current role as a comprehensive public health organization, the USPHS has evolved to meet the changing medical challenges facing Americans and to protect and promote the health of the nation.

Origins and Early History

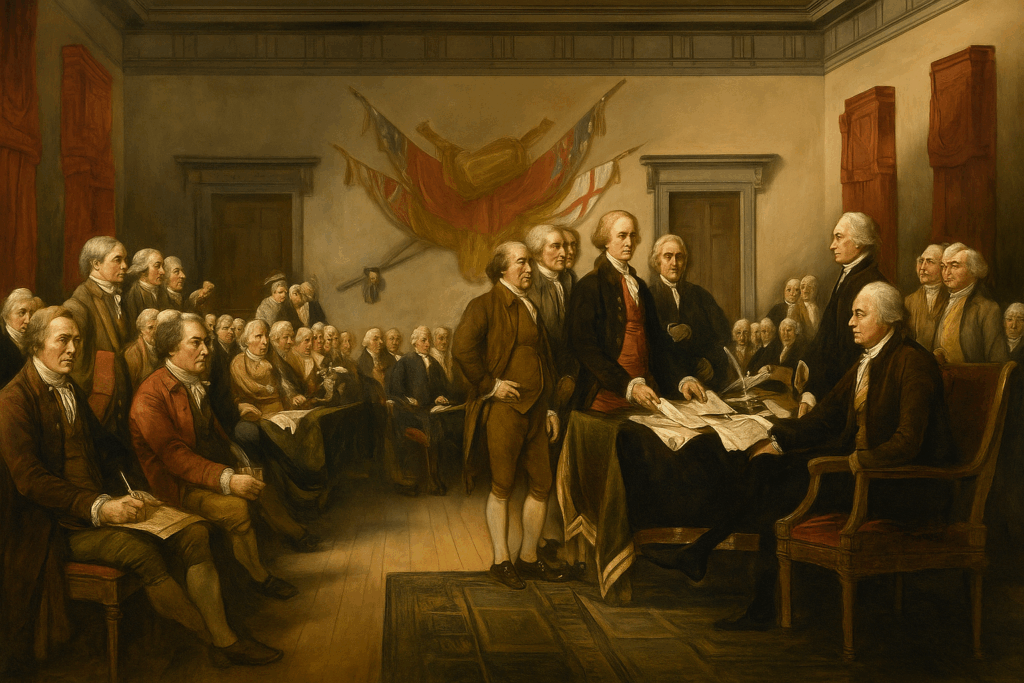

The U.S. Public Health Service traces back to 1798, when President John Adams signed “An Act for the Relief of Sick and Disabled Seamen.” This legislation established the Marine Hospital Service and created a network of hospitals to care for the merchant sailors who served America’s growing maritime commerce. The act represented one of the first examples of federally mandated health insurance: ship masters were required to pay 20 cents per month for each sailor, a sum they could deduct from the sailors’ wages, to fund medical care.

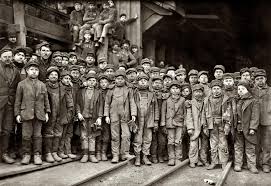

The Marine Hospital Service initially operated a series of hospitals in major port cities including Boston, New York, Philadelphia, and Charleston. These facilities served not only sick and injured sailors but also played a crucial role in preventing the spread of infectious diseases that could arrive on ships from foreign ports. This dual function of treatment and prevention would become a defining characteristic of the USPHS mission.

The transformation from the Marine Hospital Service to the modern Public Health Service began in the late 19th century. In 1870, the organization was reorganized into a centrally administered national system, and in 1871 Dr. John Maynard Woodworth became its first Supervising Surgeon (a title that later became Surgeon General), marking the beginning of its evolution into a more comprehensive public health agency. The name was officially changed to the Public Health and Marine Hospital Service in 1902, and finally to the U.S. Public Health Service in 1912, reflecting its expanded mandate beyond maritime health.

Evolution and Expansion

The early 20th century brought significant expansion to the USPHS mission. The Biologics Control Act of 1902 gave the service its first regulatory responsibilities, over vaccines and antitoxins. The 1906 Pure Food and Drug Act, enforced at first by the Department of Agriculture’s Bureau of Chemistry, led to what would eventually become the Food and Drug Administration, which was later brought into the Public Health Service. During World War I, the USPHS took on additional responsibilities for military health and epidemic control, establishing its role as a rapid response organization for national health emergencies.

The Great Depression and World War II further expanded the service’s scope. The Social Security Act of 1935 created new public health programs administered by the USPHS, while wartime demands led to increased focus on occupational health, environmental health hazards, and the health needs of defense workers. The post-war period saw the rapid growth of the National Institutes of Health, which began in 1887 as the Laboratory of Hygiene and took its plural name in 1948, cementing the USPHS role in medical research.

Major Functions and Modern Roles

Today’s U.S. Public Health Service operates as part of the Department of Health and Human Services and supports many of its major agencies and functions. The service’s mission centers on protecting, promoting, and advancing the health and safety of the American people through several key areas.

Disease Prevention and Health Promotion are the core of USPHS activities. The service works with the Centers for Disease Control and Prevention (CDC) to lead national efforts in the prevention and control of infectious and chronic diseases. From tracking disease outbreaks to promoting vaccination programs, the USPHS is part of America’s first line of defense against health threats.

Regulatory and Safety Functions represent other crucial areas. The USPHS coordinates with the Food and Drug Administration (FDA) to ensure the safety and efficacy of medications, medical devices, and food products. It works with the Agency for Toxic Substances and Disease Registry to monitor environmental health hazards. Other USPHS components are involved in regulating everything from clinical laboratories to health insurance portability.

Emergency Response and Preparedness has become increasingly important in recent decades. The USPHS maintains rapid response capabilities for natural disasters, disease outbreaks, and public health emergencies. This includes the deployment of Commissioned Corps officers to disaster zones and the maintenance of the Strategic National Stockpile of medical supplies.

Health Services for Underserved Populations continues the service’s historic mission of providing care where it’s most needed. The Health Resources and Services Administration oversees community health centers, rural health programs, and initiatives to address health disparities among vulnerable populations. The Indian Health Service is an important part of the USPHS, providing healthcare to often isolated communities.

The Commissioned Corps

One of the most distinctive features of the USPHS is its Commissioned Corps, a uniformed service of over 6,000 public health professionals. Established in 1889, the Corps is one of the eight uniformed services of the United States, alongside the six armed forces (Army, Marine Corps, Navy, Air Force, Space Force, and Coast Guard) and the NOAA Corps. Officers hold military-style ranks and wear uniforms, but their mission focuses entirely on public health rather than defense.

The Commissioned Corps provides a ready reserve of highly trained health professionals who can be rapidly deployed to address public health emergencies. From hurricane and disaster relief to pandemic assessment and treatment, Corps officers have served on the front lines of America’s health challenges, providing everything from direct patient care to epidemiological investigation and public health program management.

Contemporary Challenges and Future Directions

The U.S. Public Health Service continues to evolve in response to emerging health challenges. Climate change, antimicrobial resistance, mental health crises, and health equity concerns represent current priorities. The COVID-19 pandemic demonstrated both the critical importance of robust public health infrastructure and the challenges of maintaining public trust in health authorities.

As America faces an increasingly complex health landscape, the USPHS mission of protecting and promoting the nation’s health remains as relevant as ever. From its origins serving sailors in port cities to its current role addressing global health threats, the U.S. Public Health Service continues its quiet but essential work of safeguarding American health, adapting its methods while maintaining its core commitment to serving the public good.

The service’s history shows that effective public health requires not just scientific expertise but also the institutional ability to respond rapidly to emerging threats, the authority to implement necessary interventions, and the public trust to lead national health initiatives. As new challenges appear, the USPHS continues to build on more than two centuries of service to the American people.