I like to think of my research and my writing as being eclectic, although sometimes I have to admit they may be better described as unfocused. This post may be an example of one of those episodes. Recently I was looking through a magazine and saw an ad with an illustration that was obviously based on Monet’s Garden in Giverny. It sent me thinking about a series of lectures I had watched on French Impressionists and that got me thinking about Frédéric Bazille, an artist I have always found fascinating. I decided to spend a little time looking into him. So, I completely forgot about the project I was working on and started on this one. That may be why I have so many unfinished articles and random files of unrelated research.

In French Impressionism there are names that stand in the forefront (Monet, Renoir, Degas, Manet), and then there are names that hover just behind them. Frédéric Bazille is one of those in the shadows. He was part of the same circle, painted with the same daring brush, and showed the same fascination with light and color. Yet his life ended before Impressionism even had a name. Bazille was killed in the Franco-Prussian War in 1870, at just twenty-eight years old. His death robbed the movement of both a gifted painter and a generous friend who helped shape its history.

A Wealthy Outsider

Bazille was born in Montpellier in 1841, the son of a prosperous Protestant family. Unlike Monet and Renoir, who often lived in dire poverty, Bazille never worried about how to pay for paints or rent. That freedom made him unusual in Parisian art circles.

Bazille became interested in painting after seeing works by Eugène Delacroix, but his family insisted he also study medicine to ensure financial independence. By 1859, he had begun taking drawing and painting classes at the Musée Fabre in Montpellier with local sculptors Joseph and Auguste Baussan.

In 1862, Bazille moved to Paris, ostensibly to continue his medical studies, but he also enrolled as a painting student in Charles Gleyre’s studio. There he met three fellow students who would become close friends and collaborators: Claude Monet, Pierre-Auguste Renoir, and Alfred Sisley. He soon became part of a group of artists and writers that also included Édouard Manet and Émile Zola. After failing his medical exam (perhaps intentionally) in 1864, Bazille began painting full-time.

Bazille used his money to help everyone in his circle. He rented large studios in Paris, where friends who couldn’t afford space of their own painted and slept. He bought their finished canvases when no one else would. To Manet, Renoir, Monet, and Sisley, Bazille was not just a colleague but a lifeline. Without him, some of the paintings we now consider cornerstones of Impressionism might never have been finished.

Experiments With Light

What makes Bazille more than a wealthy patron is his own work as an artist. He was fascinated by how sunlight transformed color and how outdoor settings could frame the human figure. Long before the Impressionists formally broke with mainstream French art, Bazille was exploring these themes. In The Pink Dress (1864), he painted his cousin on a terrace overlooking the countryside, her figure half-lost in shadow, half-caught by light. In Family Reunion (1867), he executed a technically difficult group portrait outside, with natural sunlight revealing the folds of dresses and the textures of grass. In The Studio on the Rue de la Condamine (1870), Bazille turned his brush on his own circle, capturing Manet, Monet, Renoir, and Zola in a collective portrait of the avant-garde.

His style was less free than Monet’s and more deliberate than Renoir’s, but the suggestion of Impressionism is unmistakable. He was bridging the academic precision of his training with the looser brushwork of the new school.

Bazille exhibited at the Salon in Paris in 1866 and 1868. The Salon was the most prestigious and conservative art exhibition in France. These official exhibitions became increasingly controversial as their juries repeatedly rejected innovative artists, which led to alternative exhibitions such as the famous Salon des Refusés in 1863 and, starting in 1874, the independent Impressionist exhibitions.

A Call to War

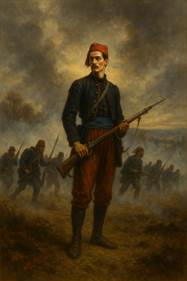

When France declared war on Prussia in 1870, Bazille enlisted in the army. He could easily have avoided service: his family connections, his money, and his medical background all gave him options. But he joined the Zouaves, an elite light infantry corps known for its colorful uniforms and reckless bravery.

On November 28, 1870, at the Battle of Beaune-la-Rolande, Bazille’s commanding officer was struck down. Bazille stepped forward to lead the attack. Within minutes, he too was hit and killed. He never saw the armistice. He never saw the first Impressionist exhibition in 1874. He never saw his friends vindicated by history.

The Spirit of Impressionism

Bazille left fewer than sixty known canvases. That small number alone ensures his reputation will never match Monet’s or Renoir’s. Yet the works he did leave offer glimpses of a painter who might have been one of the movement’s greats. He had both vision and means — a rare combination in the avant-garde world.

Without him, the Impressionists lost not only a friend but also a stabilizing force. Bazille’s studios had been safe havens, his purchases financial lifelines, his company a source of encouragement. Monet and Renoir were devastated by his death, and for years afterward, they spoke of him with unabated grief.

Art historians often speculate about what role he might have played had he lived. Perhaps he would have anchored Impressionism more firmly in the Paris art establishment, or perhaps his money and position would have shielded his friends from years of ridicule. We can only guess.

Remembering Bazille

Today, Bazille’s paintings hang in the Musée d’Orsay in Paris and the Musée Fabre in Montpellier. In the United States, they can be seen at the National Gallery of Art, the Metropolitan Museum of Art, and the Art Institute of Chicago. To see them is to feel both promise and loss. His canvases are alive with sunlight and color, and they hint at the career that never was.

Bazille reminds us that history is shaped not just by the titans who endure but also by the voices cut short. Impressionism survived and flourished without him, but it was poorer for his absence. In a way, every bright patch of sunlight in Monet’s gardens or flashing dress in Renoir’s dance halls carries a trace of the young man who painted light before the movement even had a name — and who never lived to see it shine.

Bread and Circuses: From Ancient Rome to Modern America

By John Turley

On September 16, 2025

In Commentary, History, Politics

“Already long ago, from when we sold our vote to no man, the People have abdicated our duties; for the People who once upon a time handed out military command, high civil office, legions — everything, now restrains itself and anxiously desires for just two things: bread and circuses.”

Nearly 2,000 years ago, Roman satirist Juvenal penned one of history’s most enduring political observations: “Two things only the people anxiously desire — bread and circuses.” Writing around 100 CE in his Satire X, Juvenal wasn’t celebrating this phenomenon—he was lamenting it. The poet watched as Roman citizens traded their political engagement for free grain and spectacular entertainment, becoming passive spectators rather than active participants in their democracy. The phrase has endured for nearly two millennia as shorthand for a troubling political dynamic: entertainment and consumption replacing civic engagement and accountability.

The Roman Warning

Juvenal’s critique came at a pivotal moment in Roman history. The republic had collapsed, and emperors like Augustus had systematically dismantled democratic institutions. Rather than revolt, Roman citizens seemed content as long as the government provided basic sustenance (the grain dole called annona) and elaborate spectacles at venues like the Colosseum. Political participation withered as people focused on immediate pleasures rather than long-term civic responsibilities.

The strategy worked brilliantly for Roman rulers. Keep the masses fed and entertained, and they won’t question your authority or demand meaningful representation. It was political control through distraction—a form of soft authoritarianism that maintained order without overt oppression. The policy was effective in the short term—peace in the streets and loyalty to the emperors—but disastrous over time. Rome’s population became disengaged from politics, while real power consolidated in the hands of a few.

Modern American Parallels

Fast-forward to contemporary America, and Juvenal’s observation feels uncomfortably relevant. While we don’t have gladiatorial games, we do have our own version of “circuses”—professional sports, reality TV, social media feeds, and celebrity culture that dominate public attention. These aren’t inherently problematic, but they become concerning when they crowd out civic engagement.

Our modern “bread” takes various forms: government assistance programs, subsidies, and economic policies designed to maintain consumer spending. These programs often serve legitimate purposes, but they can also function as political tools to maintain public satisfaction and suppress dissent. Beyond them, we are saturated with cheap goods, instant delivery services, and mass consumerism. For many, economic struggles are temporarily softened by accessible consumption, from fast food to online shopping. Yet material comfort often masks deeper inequalities and systemic challenges such as wage stagnation, healthcare costs, and mounting national debt.

Consider how political campaigns increasingly focus on entertainment value rather than substantive policy debates. Politicians hire social media managers and appear on talk shows, understanding that capturing attention often matters more than presenting coherent governance plans. Meanwhile, voter turnout for local elections—where citizens have the most direct impact—remains dismally low.

The Distraction Economy

Perhaps most striking is how our information landscape mirrors Roman spectacles. We’re bombarded with sensational news, viral content, and manufactured controversies that generate strong emotional reactions but little productive action. Complex policy issues get reduced to soundbites and memes, making genuine democratic deliberation increasingly difficult.

Social media algorithms are specifically optimized for engagement, not enlightenment. They feed us content designed to provoke reactions—anger, outrage, schadenfreude—rather than encourage thoughtful consideration of difficult issues. This creates a population that feels politically engaged through constant consumption of political content while remaining largely passive in actual civic participation.

The danger of “bread and circuses” in modern America lies in apathy. When civic participation declines, voter turnout falls, and policy debates get reduced to simplistic slogans, elites face less scrutiny. The result is a weakened democracy, vulnerable to manipulation and short-term thinking.

Breaking the Cycle

Juvenal’s warning doesn’t mean we should abandon entertainment or social programs. Rather, it suggests we need intentional balance. Democratic societies thrive when citizens remain actively engaged in governance beyond just voting every few years.

This means staying informed about local issues, attending town halls, contacting representatives, and participating in community organizations. It means choosing substance over spectacle and long-term thinking over immediate gratification.

The Roman Republic fell partly because its citizens stopped paying attention to governance. Juvenal’s “bread and circuses” reminds us that democracy requires constant vigilance—and that comfortable distraction can be freedom’s most seductive enemy.