Recently, I’ve been looking at various political philosophies. I’ve written about fascism, totalitarianism, authoritarianism, autocracy and kleptocracy. In this post I’m going to look at theoretical communism versus the reality of Stalinist communism, and I would be remiss if I didn’t at least briefly mention oligarchy as currently practiced in Russia.

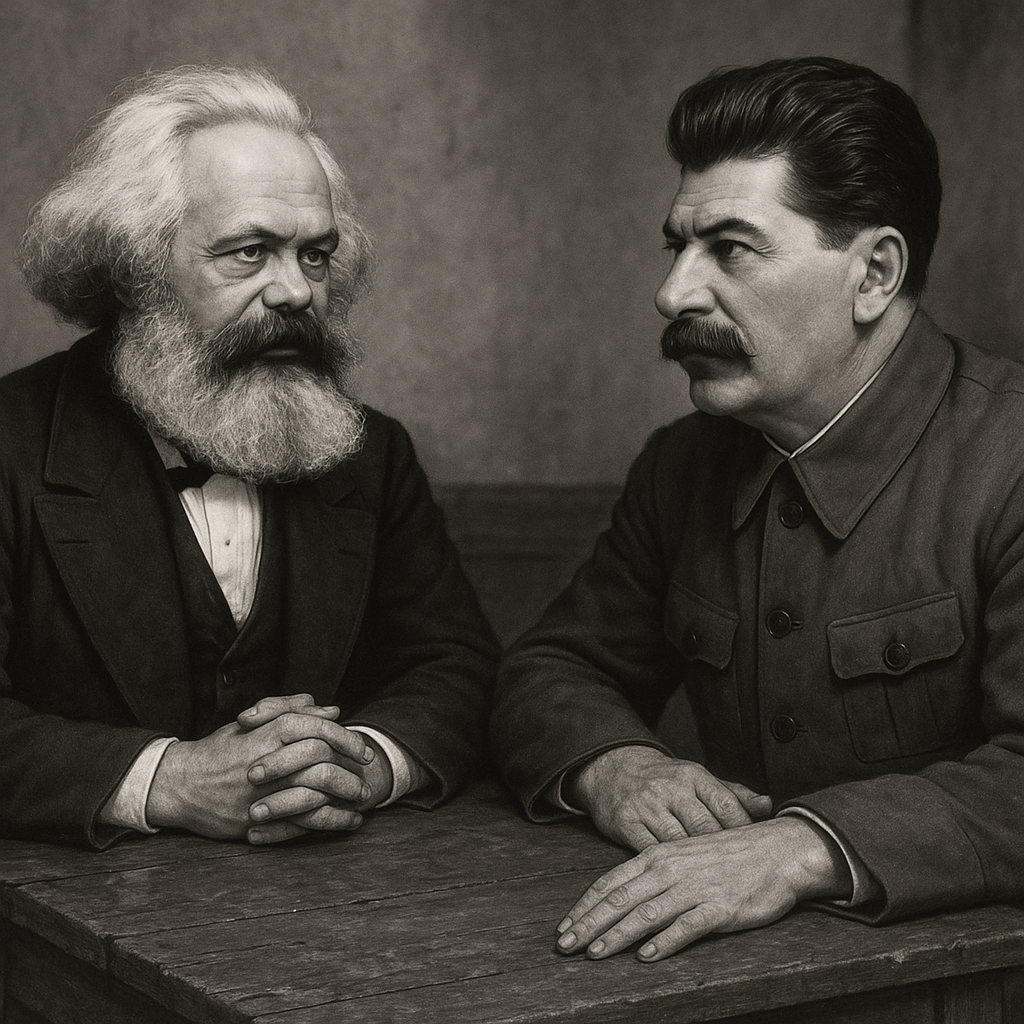

The gap between Karl Marx’s theoretical vision of communism and its implementation under Joseph Stalin’s leadership in the Soviet Union represents one of history’s most significant divergences between ideological theory and political practice. Both claimed the same ultimate goal, a classless and stateless society, but their approaches and outcomes differed in fundamental ways that continue to shape our world today: Marx envisioned a workers’ utopia, while Stalin built a brutal dictatorship.

The Marxist Vision

Marx envisioned communism as the natural culmination of historical progress, emerging from the inherent conflicts within capitalism. In his theoretical framework, the working class (proletariat) would eventually overthrow the capitalist system through revolution, leading to a transitional socialist phase before achieving true communism. This final stage would be characterized by the absence of social classes, of private ownership of the means of production, and, ultimately, of the state itself.

Central to Marx’s concept was the idea that communism would emerge from highly developed capitalist societies where industrial production had reached its peak. He believed that the abundance created by advanced capitalism would make scarcity obsolete, allowing society to operate according to the principle “from each according to his ability, to each according to his needs.” The state, having served its purpose as an instrument of class rule, would simply “wither away” as class distinctions disappeared.

Marx also emphasized that the transition to communism would be an international phenomenon. He argued that capitalism was inherently global, and therefore its replacement would necessarily be worldwide. The famous rallying cry “Workers of the world, unite!” reflected this internationalist perspective, suggesting that communist revolution would spread across national boundaries as workers recognized their common interests.

The Stalinist Implementation

Vladimir Lenin took firm control of Russia following the revolution in 1917 and oversaw the creation of a state characterized by centralization, suppression of opposition parties, and the establishment of the Cheka (secret police) to enforce party rule. Economically, Lenin’s government shifted from War Communism (state control of production during the civil war) to the New Economic Policy (NEP) in 1921, which allowed limited private trade and small-scale capitalism to stabilize the economy. The country formally became the Union of Soviet Socialist Republics in 1922. This period laid the groundwork for the highly centralized, totalitarian state under Stalin that followed Lenin’s death in 1924.

Stalin’s approach to building communism in the Soviet Union diverged sharply from Marx’s theoretical blueprint. Rather than emerging from advanced capitalism, Stalin attempted to construct socialism in a largely agricultural society that had barely begun industrialization. This fundamental difference in starting conditions shaped every aspect of the Soviet experiment.

Instead of the gradual withering away of the state, Stalin presided over an unprecedented expansion of state power. The Soviet government under his leadership controlled virtually every aspect of economic and social life, from industrial production to agricultural collectivization to cultural expression. The state became not a temporary tool for managing the transition to communism, but a permanent and increasingly powerful institution that dominated society. By the early 1930s, Stalin had centralized power in his own hands, sidelining collective decision-making bodies such as the Politburo and the soviets.

Marx emphasized rule by the proletariat, with power shared equally among the people. Stalin, by contrast, fostered a cult of personality through relentless propaganda. His image appeared on posters, on statues, and in schools. History books were rewritten to credit him for Soviet successes, often erasing Lenin, Trotsky, or others. He was referred to as the “Father of Nations,” “Brilliant Genius,” and “Great Leader.” Loyalty to Stalin became more important than loyalty to the Communist Party or its ideals. The government and the economy operated at his personal direction, enforced by the secret police, censorship, executions, and mass purges of dissidents.

Stalin implemented a command economy, in which the government or central authority makes all major decisions about production, investment, pricing, and the allocation of resources, rather than leaving those choices to market forces. In this system, planners typically set production targets, control industries, and determine what goods and services will be available, often with the goal of achieving social or political objectives such as central control and rapid industrialization. This is the direct opposite of the voluntary cooperation Marx had envisioned. The forced collectivization of peasants onto government farms, rapid industrialization through five-year plans, and the use of prison labor in gulags represented a top-down model of development that contradicted Marx’s emphasis on worker empowerment and democratic participation.

Where Marx emphasized emancipation and freedom for workers, Stalinist policies involved widespread repression, political purges, forced labor camps, and censorship. Most notable is the period that came to be known as the “Great Purge,” also called the “Great Terror,” a campaign of political repression between 1936 and 1938. It involved widespread arrests, forced confessions, show trials, executions, and imprisonment in labor camps (the Gulag system). Stalin accused perceived political rivals, military leaders, intellectuals, and ordinary citizens of being disloyal or conducting “counter-revolutionary” activities. It is estimated that about 700,000 people were executed by firing squad after being branded “enemies of the people” in show trials or secret proceedings. Another 1.5 to 2 million people were arrested and sent to Gulag labor camps, prisons, or exile. Many died from overwork, malnutrition, disease, or harsh conditions.

Perhaps most significantly, Stalin abandoned Marx’s internationalist vision in favor of “socialism in one country.” This doctrine, developed in the 1920s, argued that the Soviet Union could build socialism independently of worldwide revolution. This shift not only contradicted Marx’s theoretical framework but also led to policies that prioritized Soviet national interests over international worker solidarity.

Key Contradictions

The differences between Marxist theory and Stalinist practice created several fundamental contradictions. Where Marx predicted the elimination of social classes, Stalin’s Soviet Union developed a rigid hierarchy with the Communist Party elite at the top, followed by technical specialists, workers, and peasants. This new class structure, while different from capitalist society, still involved significant inequalities in power, privilege, and access to resources.

Marx’s vision of worker control over production stood in stark contrast to Stalin’s centralized command economy. Rather than workers democratically managing their workplaces, Soviet workers found themselves subject to increasingly detailed state control over their labor. The factory became less a site of worker empowerment than a component in a vast state machine directed from Moscow.

The treatment of dissent also revealed fundamental differences. Marx believed that communism would eliminate the need for political repression as class conflicts disappeared. Stalin’s regime, however, relied extensively on surveillance, censorship, and violent suppression of opposition. The extensive use of terror against both perceived enemies and ordinary citizens contradicted Marx’s vision of a society based on cooperation and mutual benefit.

Modern Russia

Before going further, I want to say a brief word about modern Russia and its governmental and economic situation since the breakup of the Soviet Union.

An oligarchy is a form of government where power rests in the hands of a small number of people. These individuals typically come from similar backgrounds – they might be distinguished by wealth, family ties, education, corporate control, military influence, or religious authority. The word comes from the Greek “oligarkhia,” meaning “rule by few.” In an oligarchy, this small group makes the major political and economic decisions that affect the entire population, often prioritizing their own interests over those of the broader society.

Modern Russia’s economy is often described as having oligarchic features because a relatively small group of wealthy business leaders, many of whom made their fortunes during the chaotic privatization of the 1990s, maintains outsized influence over key industries like energy, banking, and natural resources. While Russia is technically a mixed economy with both private and state involvement, political connections determine who gains access to wealth and power. The result is a system in which economic opportunity is concentrated among elites closely tied to the Kremlin, one that most closely resembles an oligarchy.

Historical Context and Consequences

Understanding the differences between Marxist theory and Stalinist implementation requires considering the historical context in which Stalin operated. The Soviet Union faced external threats, internal resistance, and the enormous challenge of rapid modernization. Stalin’s supporters argued that harsh measures were necessary to defend the revolution and build industrial capacity quickly enough to survive in a hostile international environment.

Critics, however, contend that Stalin’s methods created a system that was fundamentally incompatible with Marx’s vision of human liberation. The concentration of power in a single party (let alone a single person), the suppression of democratic institutions, and the extensive use of violence and coercion demonstrate that Stalinist practice moved away from, rather than toward, Marx’s goals.

The legacy of this divergence continues to influence contemporary political debates. Supporters of Marxist theory often argue that Stalin’s failures demonstrate the dangers of abandoning egalitarian principles and internationalist perspectives. Meanwhile, critics of communism point to the Soviet experience as evidence that Marxist ideals are inherently unrealistic or even dangerous.

This comparison reveals the complex relationship between political theory and practice, highlighting how historical circumstances, leadership decisions, and practical constraints can shape the implementation of ideological visions in ways that may fundamentally alter their character and outcomes.

Bread and Circuses: From Ancient Rome to Modern America

By John Turley

On September 16, 2025

In Commentary, History, Politics

“Already long ago, from when we sold our vote to no man, the People have abdicated our duties; for the People who once upon a time handed out military command, high civil office, legions — everything, now restrains itself and anxiously desires for just two things: bread and circuses.”

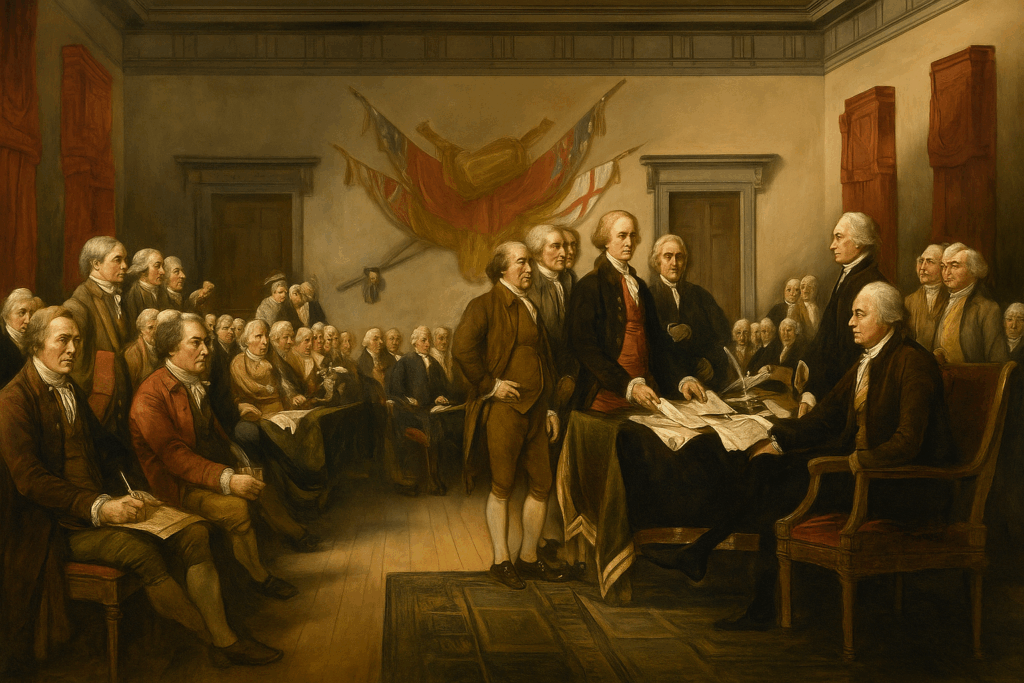

Nearly 2,000 years ago, Roman satirist Juvenal penned one of history’s most enduring political observations: “Two things only the people anxiously desire: bread and circuses.” Writing around 100 CE in his Satire X, Juvenal wasn’t celebrating this phenomenon; he was lamenting it. The poet watched as Roman citizens traded their political engagement for free grain and spectacular entertainment, becoming passive spectators rather than active participants in public life. The phrase has endured ever since as shorthand for a troubling political dynamic: entertainment and consumption replacing civic engagement and accountability.

The Roman Warning

Juvenal’s critique came at a pivotal moment in Roman history. The republic had collapsed, and emperors like Augustus had systematically dismantled democratic institutions. Rather than revolt, Roman citizens seemed content as long as the government provided basic sustenance (the grain dole called annona) and elaborate spectacles at venues like the Colosseum. Political participation withered as people focused on immediate pleasures rather than long-term civic responsibilities.

The strategy worked brilliantly for Roman rulers. Keep the masses fed and entertained, and they won’t question your authority or demand meaningful representation. It was political control through distraction—a form of soft authoritarianism that maintained order without overt oppression. The policy was effective in the short term—peace in the streets and loyalty to the emperors—but disastrous over time. Rome’s population became disengaged from politics, while real power consolidated in the hands of a few.

Modern American Parallels

Fast-forward to contemporary America, and Juvenal’s observation feels uncomfortably relevant. While we don’t have gladiatorial games, we do have our own version of “circuses”—professional sports, reality TV, social media feeds, and celebrity culture that dominate public attention. These aren’t inherently problematic, but they become concerning when they crowd out civic engagement.

Our modern “bread” takes various forms: government assistance programs, subsidies, and economic policies designed to maintain consumer spending. These programs often serve legitimate purposes, but they can also function as political tools to maintain public satisfaction and suppress dissent. Beyond them, we are saturated with cheap goods, instant delivery services, and mass consumerism. For many, economic struggles are temporarily softened by accessible consumption, from fast food to online shopping. Yet material comfort often masks deeper inequalities and systemic challenges such as wage stagnation, healthcare costs, and mounting national debt.

Consider how political campaigns increasingly focus on entertainment value rather than substantive policy debates. Politicians hire social media managers and appear on talk shows, understanding that capturing attention often matters more than presenting coherent governance plans. Meanwhile, voter turnout for local elections—where citizens have the most direct impact—remains dismally low.

The Distraction Economy

Perhaps most striking is how our information landscape mirrors Roman spectacles. We’re bombarded with sensational news, viral content, and manufactured controversies that generate strong emotional reactions but little productive action. Complex policy issues get reduced to soundbites and memes, making genuine democratic deliberation increasingly difficult.

Social media algorithms are specifically optimized for engagement, not enlightenment. They feed us content designed to provoke reactions—anger, outrage, schadenfreude—rather than encourage thoughtful consideration of difficult issues. This creates a population that feels politically engaged through constant consumption of political content while remaining largely passive in actual civic participation.

The danger of “bread and circuses” in modern America lies in apathy. When civic participation declines, voter turnout falls, and policy debates get reduced to simplistic slogans, elites face less scrutiny. The result is a weakened democracy, vulnerable to manipulation and short-term thinking.

Breaking the Cycle

Juvenal’s warning doesn’t mean we should abandon entertainment or social programs. Rather, it suggests we need intentional balance. Democratic societies thrive when citizens remain actively engaged in governance beyond just voting every few years.

This means staying informed about local issues, attending town halls, contacting representatives, and participating in community organizations. It means choosing substance over spectacle and long-term thinking over immediate gratification.

The Roman Republic fell partly because its citizens stopped paying attention to governance. Juvenal’s “bread and circuses” reminds us that democracy requires constant vigilance—and that comfortable distraction can be freedom’s most seductive enemy.