Understanding Classical Socialism, Democratic Socialism, and Social Democracy in Today’s America

If you’ve ever wondered what politicians really mean when they throw around words like “socialism” or “social democracy,” you’re not alone. These ideas used to live mostly in political theory textbooks. Now they show up in campaign speeches and social media debates. With figures like Bernie Sanders and groups like the Democratic Socialists of America bringing these ideas into the mainstream, it’s worth sorting out what each actually means.

Even though classical socialism, democratic socialism, and social democracy all claim to focus on fairness and reducing inequality, they take very different routes to get there. Understanding those differences helps make sense of what’s really being argued about in American politics today.

Classical Socialism: The Original Blueprint

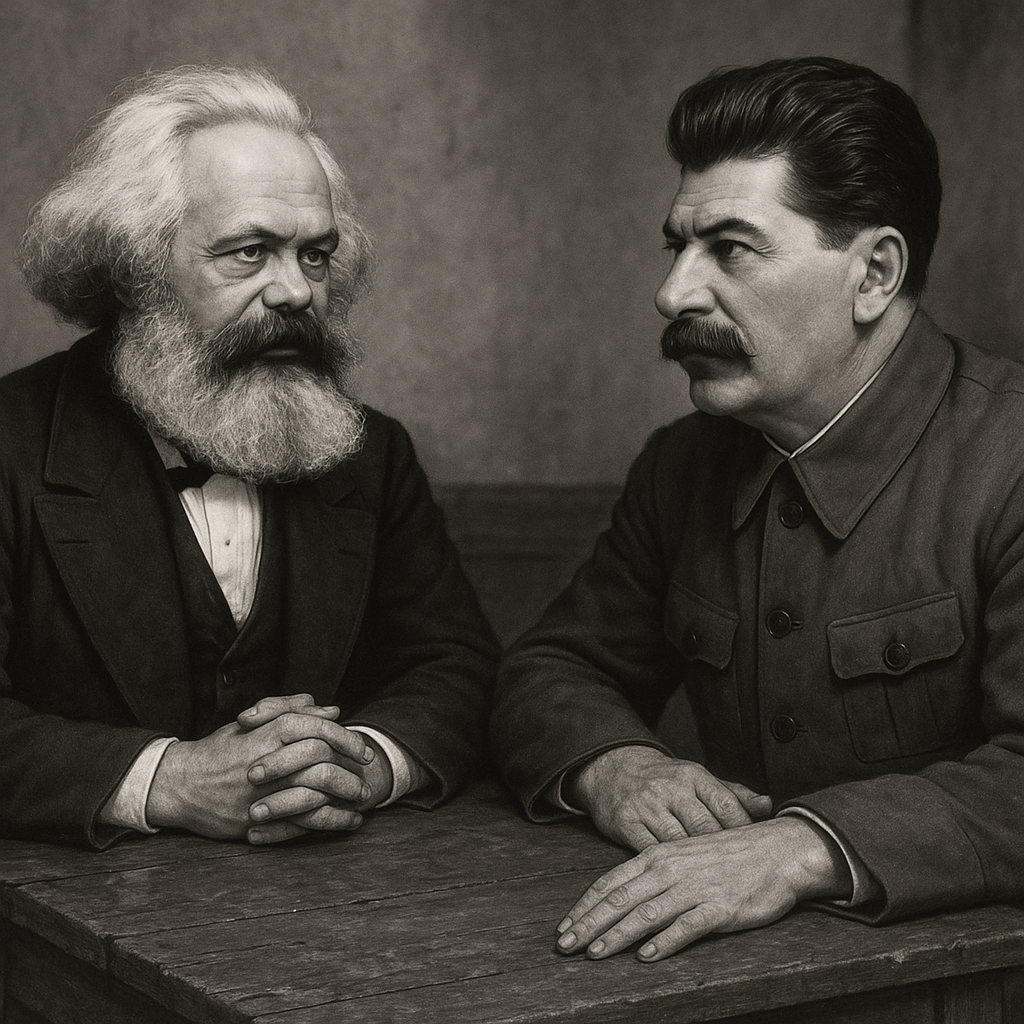

Classical socialism came out of the 19th century, when industrial capitalism was grinding workers down and a couple of guys named Karl Marx and Friedrich Engels thought they had the fix. Their idea: workers should collectively own and control the means of production — factories, land, and major industries.

This wasn’t just about taxing the rich. It was about redesigning the whole system from the ground up, through violent revolution if necessary. In theory, private property creates exploitation; collective ownership ends it. In practice, that has often meant top-down control by the state, with economies planned from above, as in the Soviet Union or Maoist China.

The central ideas of classical socialism are collective ownership of big industries and central or cooperative planning instead of market competition. Production is aimed at meeting needs, not generating profits, with the eventual goal of a classless, stateless society. Classical socialism accepts that revolution will most likely be necessary to get there.

In theory, classical socialism wipes out worker exploitation and wealth extremes. Its central tenet is that production serves human needs, not corporate profit. In practice, it has often led to authoritarian governments, clumsy economic planning, and little room for innovation or dissent.

Would it work in America?

Probably not. The U.S. has deep cultural roots in individualism and private enterprise. Replacing markets with centralized planning would clash hard with both our Constitution and national temperament.

The Siblings of Socialism

In the real world, classical socialism has produced two offspring: the confusingly named democratic socialism and social democracy. While they share many similarities, the major difference is that democratic socialism aims to replace capitalism, while social democracy aims to reform capitalism and make it more humane.

Democratic Socialism

Democratic socialism shares many of classical socialism’s goals but emphasizes getting there through elections — not revolution. It aims to establish central control of key parts of the economy while protecting some political freedom and most civil rights.

The vision of Democratic Socialism is collective (public) ownership of major industries like energy, transportation, manufacturing, and communications. The economy would be directed and managed by the government, but that government would be elected, not authoritarian. Within individual industries, there would be worker self-management and workplace democracy. Private businesses would still be allowed on a small scale (think Mom and Pop retail). It envisions gradual reform, not violent upheaval, while maintaining democracy and civil liberties.

There are several major drawbacks to democratic socialism. Progress can be slow, easily reversed, and still subject to bureaucratic inefficiencies. Competing globally with capitalist economies might also prove tough. To me, the biggest drawback is the question of how major corporations, financial institutions, and wealthy businesspeople could ever be convinced to peacefully hand over control of major portions of the economy to a “people’s collective.”

How it fits in the U.S.:

Democratic Socialism has grown in popularity, especially among younger voters, although many of them seem to take it to mean making things fairer rather than what Democratic Socialism actually entails.

Bernie Sanders and Alexandria Ocasio-Cortez wear the label proudly. Still, the idea of government control of a significant portion of the economy faces serious resistance here. Realistically, it’s more a movement that nudges policy leftward than a model ready for prime time.

Social Democracy: Capitalism with Guardrails

Social democracy takes a different track. It doesn’t want to abolish capitalism — it wants to civilize it. Think Scandinavia: private ownership, strong markets, but also universal healthcare, paid leave, and free college.

The central element of Social Democracy is a mixed economy with both public and private sector control. In some models, the government directly manages public services such as healthcare, energy, and transportation. In other models, these services remain under private control, with strong government regulation.

Regardless of the chosen model, a Social Democracy is a strong welfare state with universal benefits. Welfare in this context means earned support for hardworking citizens. Perhaps it should be called an earned benefits state, as the term welfare has a pejorative implication for some.

There is strong market regulation to prevent unfair competition, price gouging, and monopolies that are detrimental to the public good. There is a progressive tax program designed to reward productivity while heavily taxing passive or nonproductive income. These taxes are used to fund generous public services.

The government remains elected and responsive to the public. The model has proven to work: Nordic countries show that capitalism can coexist with equality and innovation. While it is expensive, and high taxes can be a political lightning rod, it leaves capitalism’s basic structure intact. There is a constant risk that inequality can creep back if protections weaken.

In the U.S. context:

Social democracy may be the most realistic option. As social scientist Lane Kenworthy puts it, America already is a social democracy — just not a particularly generous one. We’ve got Medicare, Social Security, public education — we just underfund them compared to our European cousins. The reality is that income lost to increased taxation is regained through decreases in insurance premiums, healthcare costs, education expenses and retirement expenses.

With Elon Musk on the cusp of becoming the world’s first trillionaire, we have to ask: “How much is enough before the ultra-wealthy accept their social responsibility to the working people who made their wealth possible?” The bottom line is that when the ultra-wealthy are required to pay their fair share of taxes, public services become affordable. We should be supporting people, not yachts.

What’s Realistically Possible Here?

Culturally, Americans value freedom, competition, and property rights. Yet polls show younger voters are warming up to “socialism,” even if most don’t seem to be clear about the specifics. Institutionally, the U.S. political system makes sweeping change tough. Our winner-take-all elections favor a two-party system that leaves little room for socialist parties to grow independently.

Democratic Socialism may continue to shape the conversation, but full socialism, especially the classic Marxist kind, is not likely to take hold here. From my perspective, the most realistic option, Social Democracy, is too often overlooked in these discussions.

Given that, the path of least resistance looks like expanded Social Democracy: things like a revised and equitable tax code, universal healthcare, free or subsidized higher education, paid family leave, stronger labor laws, and public investment in infrastructure and green energy.

Social Democracy looks like the most attainable path — not a revolution, but an evolution toward a fairer society. Only time will tell.

How A Nobel Laureate Thinks We Can Save The American Economy…But It Won’t Be Easy

By John Turley

On October 19, 2025

In Commentary, Politics

I just finished People, Power, and Profits by Joseph Stiglitz, the Nobel Prize-winning economist. He wrote it during Trump’s first term, but honestly, the world he describes feels even more relevant now.

Stiglitz doesn’t sugarcoat it: capitalism, as we’re practicing it today, is broken. Monopolies dominate markets, inequality has gone wild, and trust in democracy is running on fumes. His proposed fix? Something he calls progressive capitalism — capitalism with guardrails, conscience, and a sense of fairness.

Stiglitz makes the case that our economic system is rigged — not by accident, but by design. Here are his most compelling arguments and what he thinks we should do about them.

1. Taxation and Rent-Seeking: The Rigged Game

Stiglitz draws a sharp distinction between making money through productive work and extracting it through what economists call “rent-seeking” – essentially, using power to skim wealth without creating value. Think of a pharmaceutical company that buys a drug patent and jacks up prices 5,000%, or telecom monopolies that divide up markets to avoid competing.

His argument is straightforward: our tax system rewards the wrong behavior. Long-term capital gains are taxed at lower rates than wages, which means someone living off investments can pay a lower rate than someone working a regular job. Meanwhile, the wealthy can afford armies of accountants to exploit loopholes that most people don’t even know exist.
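To see how that rate gap plays out, here is a rough back-of-the-envelope sketch in Python. The flat rates and the $100,000 income are numbers I made up for illustration; they are not actual tax law or figures from Stiglitz, and real taxes use brackets and deductions that this ignores.

```python
def tax_owed(income: float, rate: float) -> float:
    """Flat-rate tax: a deliberate simplification of real bracketed schedules."""
    return income * rate


# Hypothetical illustrative rates, NOT actual U.S. tax law.
WAGE_RATE = 0.24           # assumed effective rate on wage income
CAPITAL_GAINS_RATE = 0.15  # assumed rate on long-term investment gains

income = 100_000.0  # same income for both people, assumed for comparison

wage_tax = tax_owed(income, WAGE_RATE)
gains_tax = tax_owed(income, CAPITAL_GAINS_RATE)
gap = wage_tax - gains_tax

print(f"Worker pays:   ${wage_tax:,.0f}")
print(f"Investor pays: ${gains_tax:,.0f}")
print(f"Difference:    ${gap:,.0f}")
```

Under these assumed rates, two people with identical incomes end up with different tax bills purely because of where the money came from, which is the incentive problem Stiglitz is pointing at.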

What Stiglitz recommends: Tax wealth more aggressively, especially inherited wealth. Close the capital gains loophole. Tax rent-seeking activities heavily while reducing taxes on productive work and innovation. The goal isn’t just revenue – it’s changing incentives so that the path to riches runs through creating value, not extracting it.

2. Green Energy and the True Cost of Pollution

Here’s where Stiglitz gets into what economists call “externalities” – costs that businesses impose on society without paying for them. When a coal plant spews carbon into the atmosphere, we all pay through climate change and increased healthcare costs, but the plant’s balance sheet looks great.

Stiglitz argues this is fundamentally dishonest accounting. If we properly priced pollution and carbon emissions, green energy wouldn’t need subsidies to compete – fossil fuels would suddenly look much more expensive once you factor in their real costs to society.
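The accounting point can be sketched in a few lines of Python. Every number here is hypothetical (the market costs, the emissions figures, and the carbon price are my own placeholders, not data from the book); the only thing the sketch shows is how adding the pollution cost can flip which source looks cheaper.

```python
from dataclasses import dataclass


@dataclass
class EnergySource:
    name: str
    market_cost: float  # dollars per MWh the buyer pays today (hypothetical)
    emissions: float    # tonnes of CO2 per MWh (hypothetical)


def full_cost(source: EnergySource, carbon_price: float) -> float:
    """Market cost plus the priced-in cost of emissions, in dollars per MWh."""
    return source.market_cost + source.emissions * carbon_price


# Made-up figures chosen only to illustrate the mechanism.
coal = EnergySource("coal", market_cost=60.0, emissions=1.0)
wind = EnergySource("wind", market_cost=70.0, emissions=0.0)

for carbon_price in (0.0, 50.0):  # dollars per tonne of CO2 (assumed)
    cheapest = min((coal, wind), key=lambda s: full_cost(s, carbon_price))
    print(f"Carbon price ${carbon_price:.0f}/tonne: cheapest is {cheapest.name}")
```

With no carbon price, the assumed coal plant wins on the balance sheet; once emissions carry a price, the ranking reverses without any subsidy for the cleaner source.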

His recommendation: Implement carbon pricing through either a carbon tax or a cap-and-trade system. Make polluters pay for the damage they cause. This isn’t about punishing business; it’s about honest accounting. Once prices reflect reality, the market will naturally shift toward cleaner energy because it’s actually cheaper when you account for all the costs.

3. Big Business and Big Banks: Concentration of Power

Stiglitz has been particularly vocal about how corporate consolidation hurts everyone except shareholders and executives. His critique of “too big to fail” is sharp. He argues that concentrated economic power in tech, finance, and even agriculture undermines both democracy and efficiency. When a few firms dominate markets, they gain power over prices, wages, and even politics: they can suppress pay, block innovation, and bend regulations in their favor.

The banking sector especially concerns him. After the 2008 financial crisis, which was caused largely by reckless behavior from major banks, these same institutions emerged even larger through government-facilitated mergers. We allowed them to pass their losses on to taxpayers but let them keep their gains as their own profits.

His recommendations: Reinstate and strengthen regulations that were stripped away, including bringing back something like the Glass-Steagall Act that separated commercial and investment banking. Break up banks that are “too big to fail.” Strengthen antitrust enforcement across all industries. Use the government’s regulatory power to promote competition rather than letting industry consolidate.

4. Money in Politics: The Feedback Loop

This is where everything connects for Stiglitz. Concentrated economic power translates directly into political power. Wealthy interests fund campaigns, lobby relentlessly, and effectively write regulations for the agencies that are supposed to oversee them. This creates a vicious cycle: economic inequality begets political inequality, which creates policies that worsen economic inequality.

Stiglitz argues that the Supreme Court’s Citizens United decision, which allowed unlimited independent corporate spending in elections, turbocharged this problem by treating money as speech and corporations as people.

His recommendations: Limit campaign spending and institute public financing of campaigns to reduce candidates’ dependence on wealthy donors. Place strict limits on lobbying and implement a robust policy against the “revolving door,” one that prevents government officials from immediately cashing in with the industries they regulated. Mandate transparency so voters know who’s funding what. Pass a constitutional amendment if necessary to overturn Citizens United.

The Big Picture

What makes Stiglitz’s argument powerful is how these pieces fit together. You can’t fix inequality just through taxation if big corporations control the political process. You can’t address climate change if fossil fuel companies can buy enough influence to block action. Everything is connected.

His recommendations aren’t radical in historical terms – they’re actually trying to restore a balance that existed during the post-war economic boom of the 1950s. Stiglitz’s “progressive capitalism” isn’t socialism. It’s capitalism with a conscience — one that remembers who it’s supposed to serve.

Whether you see that as a rescue plan or a recipe for red tape depends entirely on where you put your faith: in public institutions or private markets. The question is whether we have the political will to implement his recommendations despite entrenched opposition from those benefiting from the current system.

Either way, this debate isn’t going away — it’s the one shaping the 21st-century economy.