Not long ago I was watching a news show, and one of the panelists started talking about “a duel of words” during a congressional hearing. Intrigued by the use of the word duel, I decided to look into the history of this strange custom.

In the age before Twitter feuds, internet trolling, and legal settlements, honor was defended with pistols at dawn. The Code Duello, a set of rules governing dueling, offers a fascinating glimpse into how ideas of masculinity, reputation, and justice shaped public and private life in the Anglo-American world from the mid-18th century through the antebellum era.

The Code Duello emerged as one of the most distinctive and controversial aspects of genteel culture in the American colonies and the early United States. This elaborate system of honor-based combat, imported from European aristocratic traditions, would profoundly shape American society between 1750 and 1860, creating a culture where personal honor often trumped legal authority and where violence became a sanctioned means of dispute resolution among the elite.

European Origins

Formal dueling codes originated in Renaissance Italy and spread throughout European aristocratic circles as a means of settling disputes while maintaining social hierarchy. The practice reached the American colonies through British and Continental European settlers who brought with them deeply ingrained notions of honor, reputation, and gentlemanly conduct. Unlike random violence or brawling, dueling operated under strict protocols that emphasized courage, skill, and adherence to prescribed rituals.

The most influential codification was the Irish Code Duello of 1777, written by gentlemen of Tipperary and Galway. This twenty-six-rule system established procedures for issuing challenges, selecting weapons, determining conditions of combat, and defining acceptable outcomes. The code emphasized that dueling was a privilege of gentlemen, requiring both participants to be of equal social standing and ensuring that honor could only be satisfied through formal, regulated combat.

Colonial Implementation and Adaptation

The first recorded American duel took place in 1621 in Plymouth Colony, in present-day Massachusetts, between two servants. The practice soon became the exclusive domain of elites, however, since only “gentlemen” were considered to possess honor worth defending in this way.

The Irish Code Duello was widely adopted in America, though often with local variations. In 1838, former South Carolina Governor John Lyde Wilson published an “Americanized” version, known as the Wilson Code. It further codified the practice for the southern states and sought to encourage negotiated settlements. These codes served as the de facto law of honor, even as formal legal systems struggled to suppress dueling.

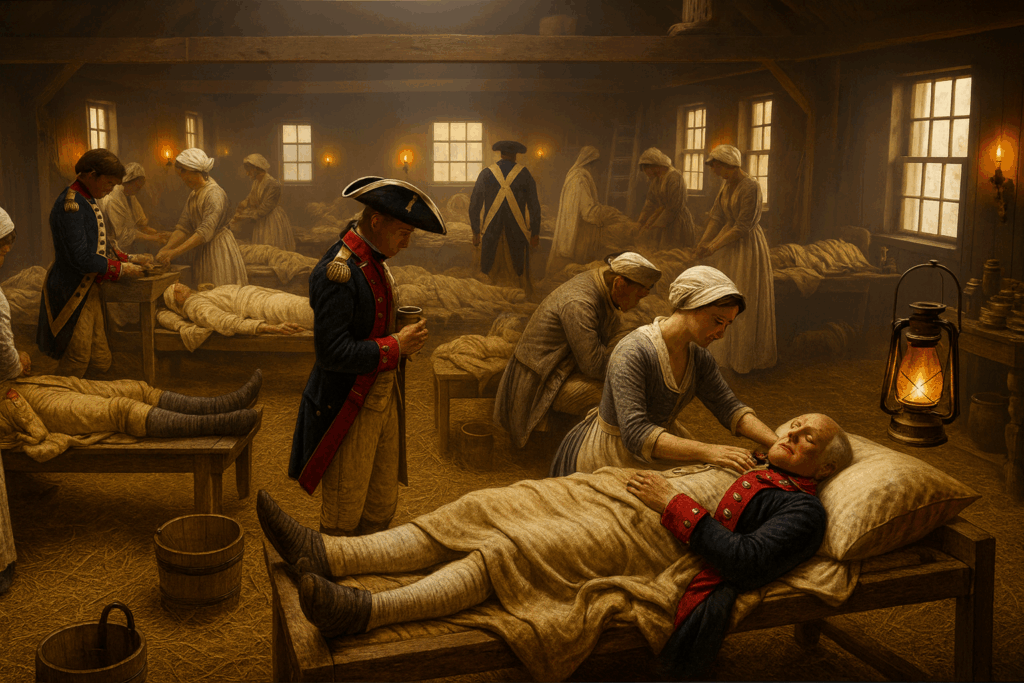

Dueling gained particular prominence within the hierarchy of southern plantation society, whose emphasis on personal honor it suited well. The ritual was highly formal: challenges were issued in writing, seconds (assistants to the duelists) attempted to mediate, weapons were chosen, and terms were carefully negotiated.

Colonial dueling adapted European practices to American circumstances. While European duels often involved swords, reflecting centuries of aristocratic martial tradition, American duelists increasingly favored pistols, which were more readily available and required less specialized training. This shift democratized dueling to some extent, as pistol proficiency was more easily acquired than swordsmanship, though the practice remained largely restricted to the upper classes.

The Revolutionary War significantly expanded dueling’s influence. Military service brought together men from different regions and social backgrounds, spreading dueling customs beyond their original geographic and social boundaries. Officers who had learned European military traditions during the conflict carried these practices into civilian life, establishing dueling as a marker of martial virtue and gentlemanly status.

The Early Republic

Following independence, dueling became increasingly institutionalized in American society. The young republic’s political culture, characterized by intense partisan conflict and personal attacks in newspapers, created numerous opportunities for perceived slights to honor that demanded satisfaction through combat.

The most famous American duel occurred in 1804 when Aaron Burr killed Alexander Hamilton at Weehawken, New Jersey. This encounter exemplified both the power and the contradictions of dueling culture. Hamilton, despite philosophical opposition to dueling, felt compelled to accept Burr’s challenge to maintain his political viability. The duel’s outcome effectively ended Burr’s political career and demonstrated how adherence to the code could destroy the very honor it purported to defend.

Before becoming president, Andrew Jackson took part in at least three duels, and he is rumored to have fought many more. In his most famous duel, in 1806, Jackson shot and killed Charles Dickinson, who had insulted his wife, Rachel. Jackson was also wounded in that duel and carried the bullet in his chest for the rest of his life.

Political dueling reached epidemic proportions in the antebellum period. Congressional representatives, senators, and other public figures regularly challenged opponents to combat over policy disagreements or personal insults. The practice became so common that some politicians deliberately provoked duels to enhance their reputation for courage, while others saw dueling as essential to maintaining credibility in public life.

Regional Variations and Social Dynamics

Dueling culture varied significantly across regions. The South developed the most elaborate and persistent dueling traditions, where the practice became intimately connected with concepts of honor, masculinity, and social hierarchy that would later influence Confederate military culture. Southern dueling codes often emphasized elaborate rituals and multiple exchanges of fire, reflecting a culture that viewed honor as more important than life itself.

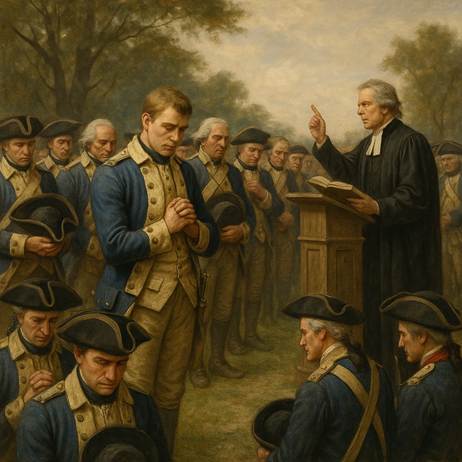

Northern attitudes toward dueling were more ambivalent. While many Northern elites participated in dueling, the practice faced stronger opposition from religious groups, legal authorities, and emerging middle-class values that emphasized commerce over honor. Anti-dueling societies formed in several Northern cities, and some states enacted specific anti-dueling legislation, though enforcement remained inconsistent.

Such efforts had a long history. Several colonies had passed laws against dueling as early as the mid-18th century, with harsh penalties that included denial of Christian burial for duelists killed in combat. Clergy denounced dueling as un-Christian, and reformers sought to eradicate it, but the practice persisted, especially in regions where courts were weak or social hierarchies unstable. In the South, with its less institutionalized markets and governance, dueling was seen as a quicker, more reliable way to settle disputes.

Western frontier regions adapted dueling to their own circumstances, often emphasizing practical marksmanship over elaborate ceremony. Frontier dueling tended to be less formal than Eastern practices, but it served similar functions in establishing social hierarchies and resolving disputes in areas where legal institutions remained weak.

Decline and Legacy

By the 1850s, dueling faced increasing opposition from legal, religious, and social reform movements. The rise of professional journalism, which could make or break reputations without resort to violence, offered an alternative arena for attacking and defending honor. Changing economic conditions that emphasized commercial success over martial virtue gradually undermined dueling’s social foundations.

The Civil War marked dueling’s effective end as a significant social institution. The massive scale of organized violence made individual combat seem anachronistic, while post-war society increasingly emphasized industrial progress over aristocratic honor. Though isolated duels continued into the 1870s, the practice lost its central role in American elite culture.

The Code Duello’s legacy extended far beyond its formal practice. It established patterns of violence, honor, and masculine identity that would influence American culture for generations, contributing to regional differences in attitudes toward violence and honor that persist today. The code’s emphasis on individual resolution of disputes also reflected broader American skepticism toward institutional authority, helping shape a culture that often preferred private justice to public law.

How the Code Duello Shaped Western Gunfighting Culture

The Code Duello was a script for settling personal disputes through controlled violence. Its influence waned in the East by the mid-1800s, but many of its ideas persisted, especially among military veterans, Southern transplants, and frontiersmen. As the American frontier expanded, the ethic of “settling scores” through personal combat found fertile ground in the West. What changed were the style and the setting.

From Pistols at Dawn to High Noon

In the Code Duello, challenges were typically issued in writing, often with formal language and designated seconds. A duel was planned, often days in advance, and fought with flintlock pistols or swords. By contrast, gunfights in the Old West were more spontaneous, often provoked by insults, cheating, or long-standing feuds. Still, both forms were ultimately about defending personal honor in public view.

Gunfighters like Wild Bill Hickok and Wyatt Earp became mythologized partly because they embodied an honor-based culture in an environment where the law was weak or slow. In many ways, the Western gunfight was an informal, democratized version of the Code Duello, stripped of its aristocratic pretenses but keeping its emotional and symbolic core.

Myth vs. Reality

Ironically, formal duels were relatively rare in the actual Old West, and many “gunfights” were closer to ambushes or drunken brawls than to ritualized combat. But dime novels, Wild West shows, and later Hollywood films reimagined them using a Code Duello-like template: two men meet face to face, in broad daylight, to resolve a conflict through a test of nerve and skill. The image of the high-noon shootout, with its silent crowd, agreed time and place, and implied code of fairness, is the Code Duello in cowboy boots. In reality, it rarely if ever happened that way.

The Duel That Never Was

I will end this discussion of the Code Duello with what may be one of the most unusual American dueling stories of all.

In 1842, Abraham Lincoln became embroiled in a public dispute with James Shields, the auditor of Illinois, largely over Illinois State banking policy and some satirical letters that mocked Shields. Shields took great offense to these attacks—particularly the ones written by Lincoln under the pseudonym “Rebecca”—and formally challenged Lincoln to a duel. According to the rules of dueling, Lincoln, as the one challenged, had the right to choose the weapons. He selected cavalry broadswords of the largest size to take advantage of his own height and reach over Shields.

The Duel’s Outcome

The duel was scheduled for September 22, 1842, on Bloody Island, a sandbar in the Mississippi River near Alton, Illinois. The site lay on the Missouri side of the river, beyond the reach of Illinois law, which prohibited dueling. According to a popular account, before any blood was shed, Lincoln demonstrated his advantage by slicing off a high tree branch with his broadsword, showcasing his reach and physical prowess. After witnessing this, and following further negotiations by their seconds, Shields and Lincoln called off the duel and resolved their differences without violence.

Legacy

Although the duel never resulted in violence, it became a notorious episode in Lincoln’s life, one he rarely spoke of later, even when asked about it. The event is commonly cited as a reflection of Lincoln’s quick wit, physical presence, and preference for peaceful resolution when possible. While Abraham Lincoln never actually fought a duel, he was briefly a participant in one of the more colorful near-duels of American political history.

A Final Thought

Perhaps the world would be a better place if we reinstituted some elements of the Code Duello. Instead of sending armies off to fight bloody battles, national leaders would settle disputes by individual combat. I suspect there would be many more negotiated settlements.

How A Nobel Laureate Thinks We Can Save The American Economy…But It Won’t Be Easy

By John Turley

On October 19, 2025

In Commentary, Politics

I just finished People, Power, and Profits by Joseph Stiglitz, the Nobel Prize-winning economist. He wrote it near the end of Trump’s first term, but honestly, the world he describes feels even more relevant now.

Stiglitz doesn’t sugarcoat it: capitalism, as we’re practicing it today, is broken. Monopolies dominate markets, inequality has gone wild, and trust in democracy is running on fumes. His proposed fix? Something he calls progressive capitalism — capitalism with guardrails, conscience, and a sense of fairness.

Stiglitz makes the case that our economic system is rigged — not by accident, but by design. Here are his most compelling arguments and what he thinks we should do about them.

1. Taxation and Rent-Seeking: The Rigged Game

Stiglitz draws a sharp distinction between making money through productive work and extracting it through what economists call “rent-seeking” – essentially, using power to skim wealth without creating value. Think of a pharmaceutical company that buys a drug patent and jacks up prices 5,000%, or telecom monopolies that divide up markets to avoid competing.

His argument is straightforward: our tax system rewards the wrong behavior. Capital gains are taxed at lower rates than wages, which means someone living off investments pays less than someone working a regular job. Meanwhile, the wealthy can afford armies of accountants to exploit loopholes that most people don’t even know exist.

What Stiglitz recommends: Tax wealth more aggressively, especially inherited wealth. Close the capital gains loophole. Tax rent-seeking activities heavily while reducing taxes on productive work and innovation. The goal isn’t just revenue – it’s changing incentives so that the path to riches runs through creating value, not extracting it.

2. Green Energy and the True Cost of Pollution

Here’s where Stiglitz gets into what economists call “externalities” – costs that businesses impose on society without paying for them. When a coal plant spews carbon into the atmosphere, we all pay through climate change and increased healthcare costs, but the plant’s balance sheet looks great.

Stiglitz argues this is fundamentally dishonest accounting. If we properly priced pollution and carbon emissions, green energy wouldn’t need subsidies to compete – fossil fuels would suddenly look much more expensive once you factor in their real costs to society.

His recommendation: Implement carbon pricing – either through a carbon tax or cap-and-trade system. Make polluters pay for the damage they cause. This isn’t about punishing business; it’s about honest accounting. Once prices reflect reality, the market will naturally shift toward cleaner energy because it’s actually cheaper when you account for all the costs.

3. Big Business and Big Banks: Concentration of Power

Stiglitz has been particularly vocal about how corporate consolidation hurts everyone except shareholders and executives, and his critique of “too big to fail” is sharp. He argues that concentrated economic power, in tech, finance, and even agriculture, undermines both democracy and efficiency. When a few firms dominate a market, they gain power over prices, wages, and even politics. They can suppress wages, block innovation, and bend regulations in their favor.

The banking sector especially concerns him. The 2008 financial crisis was caused largely by reckless behavior at major banks, yet those same institutions emerged even larger through government-facilitated mergers. We let them shift their losses onto taxpayers while keeping their gains as private profits.

His recommendations: Reinstate and strengthen regulations that were stripped away, including bringing back something like the Glass-Steagall Act that separated commercial and investment banking. Break up banks that are “too big to fail.” Strengthen antitrust enforcement across all industries. Use the government’s regulatory power to promote competition rather than letting industry consolidate.

4. Money in Politics: The Feedback Loop

This is where everything connects for Stiglitz. Concentrated economic power translates directly into political power. Wealthy interests fund campaigns, lobby relentlessly, and effectively write regulations for the agencies that are supposed to oversee them. This creates a vicious cycle: economic inequality begets political inequality, which creates policies that worsen economic inequality.

Stiglitz argues that the Supreme Court’s Citizens United decision turbocharged this problem. By allowing corporations to spend unlimited sums on independent political expenditures, it effectively treated money as speech and corporations as people.

His recommendations: Limit campaign spending and institute public financing of campaigns to reduce candidates’ dependence on wealthy donors. Place strict limits on lobbying, and adopt a robust “revolving door” policy that prevents government officials from immediately cashing in with the industries they regulated. Mandate transparency so voters know who’s funding what. Pass a constitutional amendment, if necessary, to overturn Citizens United.

The Big Picture

What makes Stiglitz’s argument powerful is how these pieces fit together. You can’t fix inequality just through taxation if big corporations control the political process. You can’t address climate change if fossil fuel companies can buy enough influence to block action. Everything is connected.

His recommendations aren’t radical in historical terms – they’re actually trying to restore a balance that existed during the post-war economic boom of the 1950s. Stiglitz’s “progressive capitalism” isn’t socialism. It’s capitalism with a conscience — one that remembers who it’s supposed to serve.

Whether you see that as a rescue plan or a recipe for red tape depends entirely on where you put your faith: in public institutions or in private markets. The question is whether we have the political will to implement his recommendations despite entrenched opposition from those who benefit from the current system.

Either way, this debate isn’t going away — it’s the one shaping the 21st-century economy.