You’ve probably heard the whispers—the Freemasons secretly controlled the American Revolution, George Washington wore a special apron, and there’s a hidden pyramid on the dollar bill. It’s the kind of thing that sounds like it came straight from a Nicolas Cage movie. But like most historical legends, the real story is more interesting (and less conspiratorial) than the mythology.

So, what’s the actual deal with Freemasons and America’s founding? Let’s dig in.

What Even Is Freemasonry?

First things first: Freemasonry started out as actual stonemasons’ guilds back in medieval Europe—think guys who built cathedrals sharing trade secrets. But by the early 1700s, it had transformed into something completely different: a philosophical club where educated men gathered to discuss big ideas about morality, reason, and how to be better humans.

The secrecy? That was part of the appeal. Lodges had rituals and passwords, sure, but the core values weren’t exactly hidden. Freemasons were all about Enlightenment thinking—liberty, equality, the pursuit of knowledge. Basically, the kind of stuff that gets you excited if you’re the type who actually enjoys reading philosophy books.

In colonial America, joining a Masonic lodge was a bit like joining an elite networking group today, except instead of swapping business cards, you discussed natural rights and wore fancy aprons. Lawyers, merchants, printers—the educated professional class—flocked to lodges for both the intellectual stimulation and the social connections.

The Founding Fathers: Who Was Actually In?

Let’s separate fact from fiction when it comes to which founders were card-carrying Masons.

Definitely Masons:

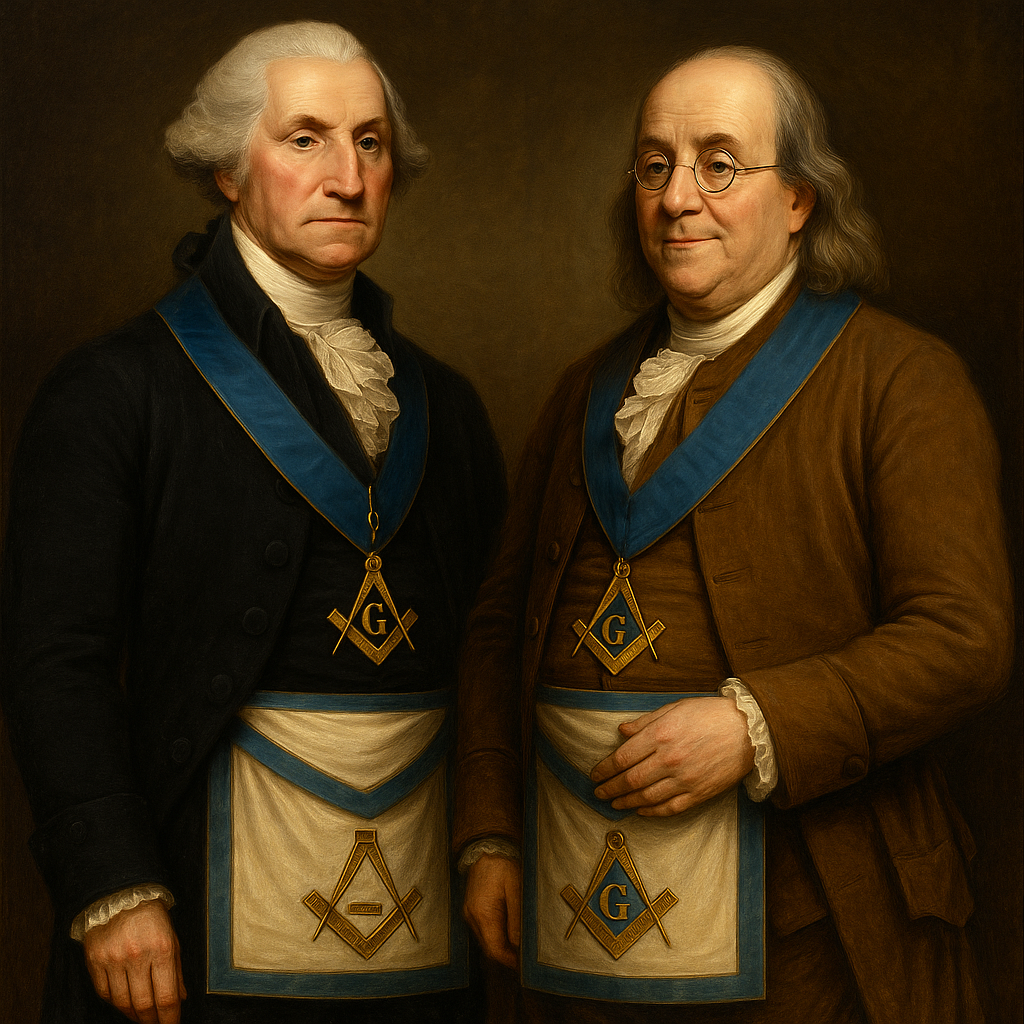

George Washington became a Master Mason at 21 in 1753. He wasn’t the most active member—he didn’t attend meetings constantly—but he took it seriously enough to wear his Masonic apron when he laid the cornerstone of the U.S. Capitol in 1793. That’s a pretty public endorsement.

Benjamin Franklin was perhaps the most dedicated Mason among the founders. Initiated in 1731, he became Grand Master of Pennsylvania’s Grand Lodge in 1734 and, during his diplomatic stint in France, served as master of the Paris lodge Les Neuf Sœurs. Franklin was basically the poster child for Enlightenment Masonry.

Paul Revere—yes, that Paul Revere—was Grand Master of Massachusetts. His midnight ride gets all the attention, but his Masonic connections were just as important to his Revolutionary activities.

John Hancock was also a Massachusetts Mason, affiliated with the Lodge of St. Andrew in Boston, though unlike Revere he never served as Grand Master. His oversized signature on the Declaration is far better documented than his lodge activity.

John Marshall, the Chief Justice who shaped American constitutional law, was a dedicated Mason. So was James Monroe, the fifth president.

Here’s a fun stat: of the 56 signers of the Declaration of Independence, at least nine (about 16%) were Masons. Among the 39 who signed the Constitution, roughly thirteen (33%) belonged to the fraternity.

The Maybes:

Thomas Jefferson? Probably not a Mason, despite endless conspiracy theories. There’s no solid evidence of membership, though his Enlightenment philosophy certainly sounded Masonic. His buddy the Marquis de Lafayette was definitely in, which hasn’t helped dispel the rumors.

Alexander Hamilton? The evidence is murky. Some historians think his writings hint at Masonic sympathies, but there’s no membership record.

Definitely Not:

John Adams wasn’t a Mason and was actually skeptical of secret societies. He still believed in many of the same principles, though—virtue, republican government, that sort of thing.

Did the Masons Really Influence the Revolution?

Here’s where it gets interesting. No, the Freemasons didn’t sit around a lodge plotting revolution like some shadowy cabal. But did their ideas and networks matter? Absolutely.

Think about what Masonic lodges provided: a space where educated colonists could meet, discuss radical ideas about natural rights and self-governance, and build trust across colonial boundaries—all without British officials breathing down their necks. These lodges brought together men from different colonies, different religious backgrounds (Anglicans, Quakers, Deists), and different social classes.

The radical part? Inside a lodge, everyone met “on the level.” It didn’t matter if you were born rich or poor—merit and virtue determined your standing. That’s pretty revolutionary thinking in the 1700s when most of the world still believed some people were just born better than others. Sound familiar? “All men are created equal” has a similar ring to it.

Freemasonry also championed religious tolerance. You had to believe in some kind of Supreme Being, but that was it; no specific creed was required. This ecumenical approach fit neatly with, and likely reinforced, the founders’ commitment to religious freedom and separation of church and state.

The Masonic motto about moving “from darkness to light” through knowledge wasn’t just ritualistic mumbo-jumbo. It reflected genuine Enlightenment belief in reason and progress—the same intellectual current that powered revolutionary thinking.

What About All That Symbolism?

Okay, let’s address the pyramid and the all-seeing eye on the dollar bill. Are they Masonic? Maybe, maybe not. The Great Seal of the United States definitely uses imagery that Masons also used—but so did lots of 18th-century groups drawing on Enlightenment and classical symbolism. The connection is debated among historians.

What’s undeniable is that Masonic culture emphasized architecture and building as metaphors for constructing a just society. When Washington laid that Capitol cornerstone in his Masonic apron, he was making a statement about building something enduring and meaningful.

The “Conspiracy” Question

Let’s be clear: there was no Masonic conspiracy to create America. The fraternity wasn’t even unified—lodges operated independently, and members included both patriots and loyalists. Officially, Masonic organizations tried to stay neutral during the Revolution, though obviously that didn’t work out perfectly when the war split families and communities.

What is true is that many of the Revolution’s most articulate, influential leaders happened to be Masons. And the fraternity’s values—liberty, equality, reason, fraternity—aligned perfectly with revolutionary ideology. Correlation, not conspiracy.

After the Revolution, Freemasonry exploded in popularity. It became associated with the Enlightenment values that had supposedly won the day. Future presidents including Andrew Jackson, James Polk, and Theodore Roosevelt were all Masons. At its 19th-century peak, an estimated one in five American men belonged to a lodge.

What’s the Bottom Line?

The Freemason influence on America’s founding is real, but it’s cultural rather than conspiratorial. The lodges provided a space where Enlightenment ideas could circulate, where colonial leaders could build networks of trust, and where egalitarian principles could be practiced in miniature.

Washington, Franklin, Hancock, and the others weren’t sitting in smoke-filled rooms with secret handshakes planning to overthrow the British crown. They were part of a broader philosophical movement that valued personal improvement, moral virtue, and human rights. The Masonic lodge was one venue—among many—where those ideas took root.

Freemasonry was one tributary feeding into the river of revolutionary thought, along with classical republicanism, British common law, various religious traditions, and plain old grievances about taxes and representation.

The real story is somehow simpler and more fascinating than the conspiracy theories: a bunch of educated colonists joined a fraternity that encouraged them to think big thoughts about human nature and just governance. Those thoughts, debated in lodges and taverns and town halls, eventually sparked a revolution.

Not because of secret symbols or mysterious rituals, but because ideas about liberty and equality—once you start taking them seriously—are genuinely revolutionary.

True confession—The Grumpy Doc is not now, nor has he ever been, a Mason.

Study The Past If You Would Define The Future—Confucius

By John Turley

On December 26, 2025

In Commentary, History

I particularly like this quotation. It is similar to the more modern version: Those who don’t learn from the past are doomed to repeat it. However, I much prefer the former because it seems to be more in the form of advice or instruction. The latter seems to be more of a dire warning. Though I suspect, given the current state of the world, a dire warning is in order.

But regardless of whether it comes in the form of advice or warning, people today do not seem to heed the importance of studying the past. The knowledge of history in our country is woeful. The lack of emphasis on the teaching of history in general, and American history specifically, is shameful. While it is tempting to blame it on the lack of interest on the part of the younger generation, I find people my own age also have little appreciation of the events that shaped our nation, the world and their lives. Without this understanding, how can we evaluate what is currently happening and understand what we must do to come together as a nation and as a world?

I have always found history to be a fascinating subject. Biographies and nonfiction historical books remain among my favorite reading. In college I always added one or two history courses every semester to raise my grade point average. Even in college I found it strange that many of my friends hated history courses and took only the minimum. At the time, I didn’t realize just how serious this lack of historical perspective was to become.

Several years ago I became aware of just how little historical knowledge most people had. At the time Jay Leno was still doing his late-night show and he had a segment called Jaywalking. During the segment he would ask people in the street questions that were somewhat esoteric and to which he could expect to get unusual and generally humorous answers. On one show, on the 4th of July, he asked people, “From what country did the United States declare independence on the 4th of July?” Of course, no one knew the answer.

My first thought was that he must have gone through dozens of people to find the four or five people who did not know the answer to his question. The next day at work, the 5th of July, I decided to ask several people, all of whom were college graduates, the same question. I got not one single correct answer, although one person at least realized, “I think I should know this.” When I told my wife, a retired teacher, she wasn’t surprised. For a long time, she had been concerned about the lack of emphasis on social studies and the arts in school curriculums. I was becoming seriously concerned about the direction of education in our country.

A lot of people are probably thinking, “So what? Who really cares what a bunch of dead people did 200 years ago?” If we don’t know what they did and why they did it, how can we understand its relevance today? We have no way to judge what actions may support the best interests of society and what might ultimately be detrimental.

Failure to learn from and understand the past results in a me-centric view of everything. If you fail to understand how things have developed, then you certainly cannot understand what the best course is to go forward. Attempting to judge all people and events of the past through your own personal prejudices leads only to continued and worsening conflict.

If you study the past you will see that there has never been general agreement on anything. There were many disagreements, debates and even a civil war over differences of opinion. It helps us to understand that there are no perfect people who always do everything the right way and at the right time. It helps us to appreciate the good that people do while understanding the human weaknesses that led to the things that we consider faults today. In other words, we cannot expect anyone to be a 100% perfect person. They may have accomplished many good and meaningful things, and those good and meaningful things should not be discarded because the person was also a human being with human flaws.

Understanding the past does not mean approving of everything that occurred, but it also means not condemning everything that does not fit into twenty-first century mores. Only by recognizing this and seeing what led to the disasters of the past can we hope to avoid repetition of the worst aspects of our history. History teaches lessons in compromise, involvement and understanding. Failure to recognize that leads to strident argument and an unwillingness to cooperate with those who may differ in even the slightest way. Rather than creating the hoped-for perfect society, it simply leads to a new set of problems and a new group of grievances.

In sum, failure to study history is a failure to prepare for the future. We owe it to ourselves and future generations to understand where we came from and how we can best prepare our country and the world for them. They deserve nothing less than a full understanding of the past and a rational way forward.

This was my first post after I started my blog in 2021. I believe it is even more relevant now.