When you think about the American Revolution, you probably picture dramatic battles like Bunker Hill or the crossing of the Delaware. But here’s something that might surprise you: the biggest killer during the war wasn’t British muskets—it was disease. And it’s not even close.

The Numbers Tell a Grim Story

Let’s talk numbers for a second. On the American side, about 6,800 soldiers died from battlefield wounds. Sounds terrible, right? Well, disease killed an estimated 17,000 to 20,000. That’s roughly two and a half to three times as many. The British and their Hessian allies faced similar odds: around 7,000 combat deaths versus 15,000 to 25,000 disease deaths.

Think about that for a moment. You were actually safer charging into battle than hanging around camp. In some regiments, disease wiped out more than a third of the troops before they even saw their first fight.

Why Was Disease So Deadly?

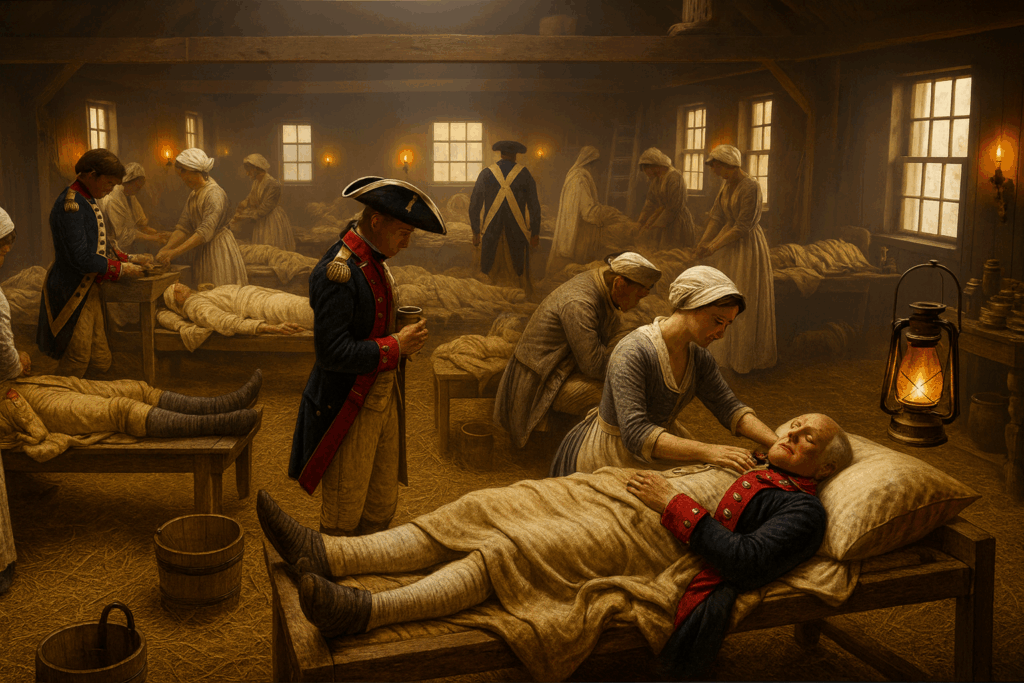

Picture yourself in a Revolutionary War military camp. Hundreds of men crammed together in makeshift shelters, no running water, primitive latrines dug too close to where everyone lives, and basically zero understanding of what we’d call “germ theory” today. It’s a perfect storm for infectious disease.

The big killers were:

Smallpox was the heavyweight champion of camp diseases. This virus killed about 30% of people it infected and spread like wildfire through packed military camps. Soldiers tried to protect themselves through a risky practice called inoculation—basically giving themselves a mild case of smallpox on purpose by rubbing infected pus into cuts on their skin. Without proper quarantine procedures, though, this sometimes made outbreaks worse instead of better.

Typhus (called “camp fever” back then) spread through lice and fleas. If you’ve ever been on a prolonged camping trip and felt gross after a few days, imagine that times a hundred. Soldiers lived in the same clothes for weeks, rarely bathed, and the parasites just had a field day. The fever, headaches, and diarrhea that came with typhus made it one of the most dreaded camp diseases.

Dysentery (charmingly nicknamed “bloody flux”) came from contaminated water and poor sanitation. When your latrine is 20 feet from your water source and you don’t understand how disease spreads, this becomes pretty much inevitable. The severe diarrhea weakened soldiers to the point where many couldn’t fight even if they wanted to, and it left them more susceptible to other diseases.

Malaria was especially important in the South, where mosquitoes thrived in the humid climate. This one actually played a fascinating role in how the war ended—but more on that in a bit.

When Disease Changed Everything

The 1775–76 invasion of Canada was a disaster largely because of smallpox. Out of 3,200 American soldiers in the Quebec campaign, 1,200 fell sick. You can’t mount much of an offensive when more than a third of your army is flat on its back with fever. Similarly, during the siege of Boston, Washington couldn’t effectively engage the British because so many of his troops were sick with smallpox. These weren’t just setbacks; they were strategic catastrophes.

This is what pushed George Washington to make one of his boldest decisions in 1777: he ordered a mass inoculation of the Continental Army. This was controversial and dangerous at the time, but it worked. Washington had survived smallpox himself as a young man, so he understood both the risks and the benefits. The inoculation program probably saved the army from complete collapse.

Medical “Treatment” Was Often Worse Than Nothing

Here’s where things get really grim. Eighteenth-century medicine was basically medieval. Doctors believed in “balancing the humors” through bloodletting—literally draining blood from already weakened soldiers. They also gave powerful laxatives to people who were already suffering from diarrhea. Yeah, let that sink in.

Pain relief meant opium-based drinks or just straight alcohol. Some doctors used herbal remedies, but results were inconsistent at best. Quinine helped with malaria, though nobody really understood why. Mostly, if you got seriously sick, your survival came down to luck and a strong constitution.

Valley Forge: The Turning Point

Valley Forge is famous for being a brutal winter encampment, and disease was a huge part of why it was so terrible. Scabies left nearly half the troops unable to serve. Dysentery and camp fever killed somewhere between 1,700 and 2,000 soldiers during that single winter—and remember, these weren’t battle casualties. These men died from preventable diseases in what was supposed to be a safe encampment.

But Valley Forge taught the Continental Army a crucial lesson. After that nightmare winter, military leaders started taking sanitation seriously. They began focusing on camp hygiene, protecting water supplies, placing latrines away from living areas, and making sure soldiers could bathe and wash their clothes and bedding.

Baron von Steuben is famous for teaching the Continental Army how to march and drill, but he also deserves credit for implementing serious sanitation reforms. These changes helped prevent future disease outbreaks and kept the army functional for the rest of the war.

The Secret Weapon at Yorktown

Here’s one of my favorite historical details: mosquitoes may have helped win American independence. At Yorktown, roughly 30% of Cornwallis’s British army was knocked out by malaria and other diseases during the siege. The British commander was trying to hold off the American and French forces while also dealing with the fact that almost a third of his troops were too sick to fight.

Many American soldiers from the southern colonies had grown up with malaria and had some partial immunity. The British? Not so much. Some historians even think Cornwallis himself might have been suffering from malaria, which could have affected his decision-making. His second-in-command, Brigadier General Charles O’Hara, was definitely seriously ill during the siege. Fighting a war while you can barely stand is a pretty significant handicap.

The Bigger Picture

The American Revolution shows us something important: wars aren’t just won on battlefields. They’re won by the side that can keep its soldiers alive and healthy. Disease shaped strategic decisions, determined the outcomes of campaigns, and killed far more men than any British regiment ever did.

Washington’s decision to inoculate the army was genuinely revolutionary (pun intended). It showed a willingness to embrace controversial medical practices for the greater good. The sanitation reforms that came out of Valley Forge laid groundwork for modern military medicine and influenced public health policies in the new United States.

So next time someone mentions the American Revolution, remember: while we celebrate the military victories, one of the most important battles was fought against an enemy you couldn’t see—and for most of the war, nobody really knew how to fight it.

The casualty figures and major disease outbreaks are well-documented in historical records. The specific percentages and numbers are estimates based on historical research, as precise record-keeping was limited during this period. The overall narrative about disease being the primary cause of death is strongly supported by multiple historical sources.

How A Nobel Laureate Thinks We Can Save The American Economy…But It Won’t Be Easy

By John Turley

On October 19, 2025

I just finished People, Power, and Profits by Joseph Stiglitz, the Nobel Prize-winning economist. He wrote this near the end of Trump’s first term, but honestly, the world he describes feels even more relevant now.

Stiglitz doesn’t sugarcoat it: capitalism, as we’re practicing it today, is broken. Monopolies dominate markets, inequality has gone wild, and trust in democracy is running on fumes. His proposed fix? Something he calls progressive capitalism — capitalism with guardrails, conscience, and a sense of fairness.

Stiglitz makes the case that our economic system is rigged — not by accident, but by design. Here are his most compelling arguments and what he thinks we should do about them.

1. Taxation and Rent-Seeking: The Rigged Game

Stiglitz draws a sharp distinction between making money through productive work and extracting it through what economists call “rent-seeking” – essentially, using power to skim wealth without creating value. Think of a pharmaceutical company that buys a drug patent and jacks up prices 5,000%, or telecom monopolies that divide up markets to avoid competing.

His argument is straightforward: our tax system rewards the wrong behavior. Capital gains are taxed at lower rates than wages, which means someone living off investments pays less than someone working a regular job. Meanwhile, the wealthy can afford armies of accountants to exploit loopholes that most people don’t even know exist.

What Stiglitz recommends: Tax wealth more aggressively, especially inherited wealth. Close the capital gains loophole. Tax rent-seeking activities heavily while reducing taxes on productive work and innovation. The goal isn’t just revenue – it’s changing incentives so that the path to riches runs through creating value, not extracting it.

2. Green Energy and the True Cost of Pollution

Here’s where Stiglitz gets into what economists call “externalities” – costs that businesses impose on society without paying for them. When a coal plant spews carbon into the atmosphere, we all pay through climate change and increased healthcare costs, but the plant’s balance sheet looks great.

Stiglitz argues this is fundamentally dishonest accounting. If we properly priced pollution and carbon emissions, green energy wouldn’t need subsidies to compete – fossil fuels would suddenly look much more expensive once you factor in their real costs to society.

His recommendation: Implement carbon pricing, through either a carbon tax or a cap-and-trade system. Make polluters pay for the damage they cause. This isn’t about punishing business; it’s about honest accounting. Once prices reflect reality, the market will naturally shift toward cleaner energy because it’s actually cheaper when you account for all the costs.

3. Big Business and Big Banks: Concentration of Power

Stiglitz has been particularly vocal about how corporate consolidation hurts everyone except shareholders and executives. His critique of “too big to fail” is sharp. He argues that concentrated economic power (in tech, finance, and even agriculture) undermines both democracy and efficiency. When a few firms dominate markets, they gain power over prices, wages, and even politics: they can suppress pay, block innovation, and bend regulations in their favor.

The banking sector especially concerns him. After the 2008 financial crisis, which was caused largely by reckless behavior from major banks, these same institutions emerged even larger through government-facilitated mergers. We allowed them to spread their losses across taxpayers through bailouts while letting them keep their gains as private profits.

His recommendations: Reinstate and strengthen regulations that were stripped away, including bringing back something like the Glass-Steagall Act that separated commercial and investment banking. Break up banks that are “too big to fail.” Strengthen antitrust enforcement across all industries. Use the government’s regulatory power to promote competition rather than letting industry consolidate.

4. Money in Politics: The Feedback Loop

This is where everything connects for Stiglitz. Concentrated economic power translates directly into political power. Wealthy interests fund campaigns, lobby relentlessly, and effectively write regulations for the agencies that are supposed to oversee them. This creates a vicious cycle: economic inequality begets political inequality, which creates policies that worsen economic inequality.

Stiglitz argues that the Supreme Court’s Citizens United decision, which allowed unlimited corporate spending in elections, turbocharged this problem by treating money as speech and corporations as people.

His recommendations: Limit campaign spending and institute public financing of campaigns to reduce candidates’ dependence on wealthy donors. Place strict limits on lobbying and implement a robust “revolving door” policy that prevents government officials from immediately cashing in with the industries they regulated. Mandate transparency requirements so voters know who’s funding what. Pass a constitutional amendment if necessary to overturn Citizens United.

The Big Picture

What makes Stiglitz’s argument powerful is how these pieces fit together. You can’t fix inequality just through taxation if big corporations control the political process. You can’t address climate change if fossil fuel companies can buy enough influence to block action. Everything is connected.

His recommendations aren’t radical in historical terms – they’re actually trying to restore a balance that existed during the post-war economic boom of the 1950s. Stiglitz’s “progressive capitalism” isn’t socialism. It’s capitalism with a conscience — one that remembers who it’s supposed to serve.

Whether you see that as a rescue plan or a recipe for red tape depends entirely on where you put your faith: in public institutions or private markets. The question is whether we have the political will to implement his recommendations despite entrenched opposition from those benefiting from the current system.

Either way, this debate isn’t going away — it’s the one shaping the 21st-century economy.