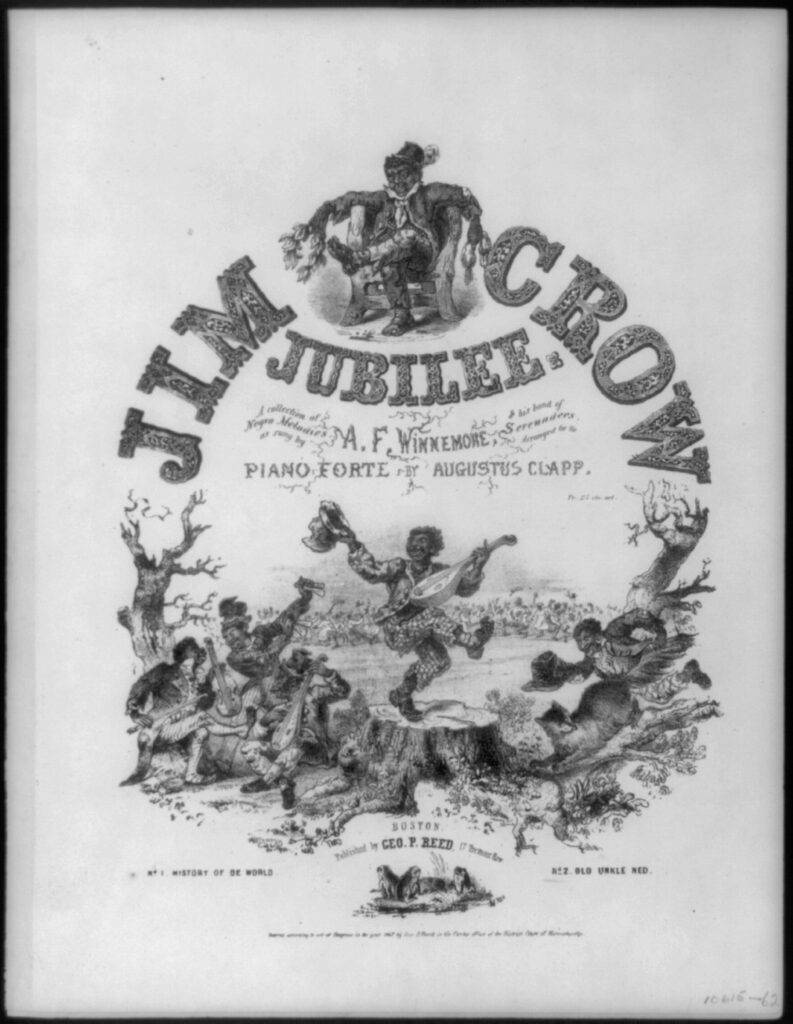

Source: Library of Congress via Wikimedia Commons, public domain

Few phrases in American history carry as much weight as Jim Crow. It is shorthand for a century of systematic racial oppression — separate schools, separate water fountains, disenfranchisement and even terror. But the term itself has an origin that is both surprising and telling: it began not in a courthouse or a legislature, but on a minstrel stage in the 1820s, as the punchline of a song-and-dance act performed by a white man in blackface.

Jump Jim Crow: The Birth of a Slur

The story starts with Thomas Dartmouth “Daddy” Rice, a struggling white actor from the Lower East Side of Manhattan who would go on to become one of the most famous entertainers of his era. Around 1828, Rice developed a stage character he called Jim Crow — a singing, dancing, shuffling caricature of a Black man, performed in blackface makeup made from burnt cork and wearing ragged clothes. The precise inspiration for the character is disputed and has, as historians put it, been lost to legend. Rice claimed to have based it on a disabled enslaved stableman he observed singing and dancing to amuse himself, though the time, place, and truth of this claim have never been verified.

What is not disputed is the impact. Rice’s “Jump Jim Crow” routine was a sensation. By 1832 he had turned the character into his signature act, touring from Louisville to Cincinnati to Philadelphia to New York, playing to sold-out houses. He eventually took the show to London and Dublin, where audiences were equally enthralled. Rice became known as “Jim Crow Rice,” the self-proclaimed “Father of American Minstrelsy.”

The character Rice created was deliberately degrading — a dim-witted, lazy, buffoonish caricature built to confirm the worst racial stereotypes white audiences held about Black Americans and to reinforce a racial hierarchy that portrayed Black people as naturally suited to servitude and exclusion from the rights of citizenship. He and his imitators spread these images across the country. By 1838, the term “Jim Crow” had crossed from the stage into everyday speech as a collective racial slur for Black people. The minstrel show had given American racism a catchy name.

It is worth pausing on the cultural mechanics of what minstrelsy did. It was not just entertainment; it was propaganda. Rice and his many imitators, including the wildly popular Christy Minstrels (for whom Stephen Foster wrote songs), systematically presented Black Americans as subhuman, ridiculous, fundamentally inferior, and unworthy of social participation. These depictions reinforced racial prejudice among white audiences and softened their moral resistance to segregation and violence long before segregation was ever written into law.

Reconstruction and Its Violent Undoing

The Civil War ended in 1865 with the abolition of slavery, and the Reconstruction era that followed was a genuine, if incomplete, experiment in racial equality. The 13th, 14th, and 15th Amendments abolished slavery, granted citizenship, and extended voting rights to Black men. For a brief window, Black Americans served in state legislatures and held federal office across the South.

That window slammed shut in 1877, when President Rutherford B. Hayes withdrew the last federal troops from the South as part of a political compromise. Southern white Democrats — calling themselves “Redeemers” — systematically retook their state legislatures using violence, voter fraud, and paramilitary terror groups like the Ku Klux Klan, the White League, and the Red Shirts. Republican officeholders were run out of town or worse. Black voters were lynched as a warning to others.

Once back in power, Southern legislatures began passing the laws that would become known as Jim Crow, a name borrowed directly from Rice’s minstrel slur. The term had traveled from the stage to common speech and then into the legal code, and it carried the same racist assumptions embodied in the minstrel character: that Black Americans were inherently inferior and should occupy a subordinate social and legal position.

Beginning with Florida in 1887, states required railroads to maintain separate cars for Black and white passengers. Similar laws quickly spread segregation to schools, restaurants, hospitals, theaters, cemeteries, parks, waiting rooms, and virtually every other point of public contact between the races. Many facilities were marked with signs reading “White” and “Colored” to emphasize the exclusion of Black citizens. The laws varied by state but shared a common goal—racial separation and political exclusion.

Plessy v. Ferguson: The Supreme Court Blesses Segregation

In 1892, a group of Black and mixed-race citizens in New Orleans organized a deliberate legal challenge to Louisiana’s Separate Car Act. They recruited Homer Plessy — a man who was seven-eighths white but legally classified as Black under Louisiana law — to board a whites-only train car and refuse to move. He was arrested on cue, and his case wound its way through the court system to the United States Supreme Court.

In Plessy v. Ferguson (1896), the Court ruled 7-1 that racial segregation was constitutional as long as the separate facilities were nominally equal. The lone dissenter, Justice John Marshall Harlan, wrote that the Constitution is color-blind and warned prophetically that the decision would prove as pernicious as the Dred Scott ruling. He was right. The “separate but equal” doctrine became the legal foundation for a total apartheid system across the South and, in many respects, well beyond it.

In practice, of course, the facilities were never equal. Black schools were chronically underfunded, with inferior buildings, used textbooks, and far lower teacher salaries. Black hospitals, where they existed at all, were understaffed and under-equipped. “Separate but equal” was a legal fiction that everyone understood as a polite cover for second-class citizenship.

Between 1890 and 1910, ten of the eleven former Confederate states rewrote constitutions or passed amendments to systematically disenfranchise Black voters through poll taxes, literacy tests administered by white registrars, grandfather clauses, and outright violent intimidation. African Americans who had briefly held political power during Reconstruction effectively disappeared from public life.

The Long Arm of Jim Crow: Life Under the System

For the better part of a century, Jim Crow was not merely a set of legal rules — it was a total social order enforced by custom, economic pressure, and the ever-present threat of violence. A Black man who looked a white man in the eye too long, or failed to step off the sidewalk, or was accused of anything at all, could find himself facing a lynch mob. Between Reconstruction and World War II, thousands of Black Americans — men, women and even children — were lynched, often publicly and festively, with onlookers smiling while posing for pictures with the victim. Law enforcement often did nothing and at times even actively participated.

The Great Migration — the mass movement of Black Americans from the South to Northern and Midwestern cities beginning around 1910 — was driven in large part by Jim Crow. Millions fled to Chicago, Detroit, New York, and Los Angeles in search of something approaching equal treatment. What they often found instead was a northern variant: informal segregation through redlining (systematic denial of mortgages in Black neighborhoods), discriminatory hiring, and residential segregation enforced by real estate covenants and social pressure.

The Legal Dismantling: Brown, the Civil Rights Act, and the Voting Rights Act

The legal edifice of Jim Crow began to crack in 1954, when the Supreme Court issued its unanimous decision in Brown v. Board of Education. Rejecting Plessy’s “separate but equal” doctrine in public education, the Court held that segregated schools were inherently unequal and unconstitutional. The ruling did not end segregation: Southern states resisted fiercely, and desegregation proceeded at a glacial pace well into the 1970s. But Brown pulled the legal rug out from under “separate but equal.”

The civil rights movement of the late 1950s and early 1960s — marked by sit-ins, freedom rides, the Birmingham campaign, the March on Washington, and countless acts of individual courage in the face of brutal repression — built the political pressure that forced legislative action. The Civil Rights Act of 1964 prohibited discrimination in public accommodations and employment. The Voting Rights Act of 1965 dismantled the machinery of Black disenfranchisement in the South, sending federal examiners into states with histories of discrimination to ensure that Black citizens could actually register and vote. By 1965 the majority of the formal Jim Crow laws had been overturned.

The Vestiges: Jim Crow’s Long Shadow

Ending Jim Crow legally is not the same as ending its consequences — or, as some argue, its functional successors. Legal scholar Michelle Alexander, in her landmark 2010 book The New Jim Crow, argues that mass incarceration has emerged as a new system of racialized social control. Black Americans are incarcerated at roughly five times the rate of white Americans. A felony conviction — often stemming from drug offenses prosecuted far more aggressively in Black communities despite similar rates of drug use across races — carries a cascade of legal disabilities: loss of voting rights in most states, exclusion from public housing and many jobs, and ineligibility for certain federal benefits. Nearly 30% of Black men carry felony records.

The racial wealth gap stands as perhaps the most stubborn vestige of a century of legal exclusion. The median Black family holds roughly one-tenth the wealth of the median white family — a gap driven not by individual choices but by generations of legally enforced exclusion from homeownership, inheritance, and wealth-building opportunities. Redlining — the systematic denial of mortgages in Black neighborhoods by federally-backed banks — was official federal policy from the 1930s through the 1960s and left neighborhood segregation patterns that persist visibly today.

Voting rights remain contested territory. The Supreme Court’s 2013 ruling in Shelby County v. Holder gutted the preclearance requirement of the Voting Rights Act, which had required states with histories of discrimination to get federal approval before changing election laws. Since then, a wave of new restrictions (voter ID laws, aggressive voter roll purges, reduced polling locations, and strict felony disenfranchisement) has fallen disproportionately on Black, Latino, and Indigenous voters. As of April 2024, 19 states require photo identification to vote, a requirement that critics say functions as a modern poll tax for those who lack access to the required documents.

Residential segregation, too, endures. American neighborhoods and, by extension, their schools remain largely segregated by race — a product not of individual choice but of policies like redlining, racially restrictive housing covenants, and “white flight” that were actively promoted by governments at every level. These patterns mean that school quality, which is largely tied to local property tax revenues, continues to diverge sharply by race.

Conclusion

The arc of Jim Crow runs from the absurd to the tragic: from a white comedian in burnt-cork makeup doing a shuffling dance routine, to a legal apparatus that stripped millions of Americans of their dignity, their rights, and their lives for a century. The name itself tells you what the architects of segregation thought of their project — it was always a contemptuous slap in the face of Black Americans.

While the formal system was legally dismantled between 1954 and 1965 — which is rightly understood as a profound achievement — the legal end of Jim Crow is not the same as justice, and it is not the same as equality. The wealth gap, the incarceration gap, the voting access gap, and the education gap all bear the fingerprints of a system that successfully kept Black Americans subordinate for generations.

Understanding Jim Crow fully requires tracing it from its shameful origins in a minstrel song, through its deadly flowering as a legal regime, to its present-day aftereffects. The name may have disappeared from the law books, but the consequences have not. Some critics of the current administration see the dismantling of diversity, equity, and inclusion (DEI) programs as an indirect form of Jim Crow revival or neo-segregation. They believe that race-neutral language is being used to reproduce racial inequality without openly naming race. This is sometimes described as “Jim Crow 2.0” or “colorblind Jim Crow,” and it should be no more acceptable than its more blatant ancestor.

Sources

1. Wikipedia — Thomas D. Rice: https://en.wikipedia.org/wiki/Thomas_D._Rice

2. Wikipedia — Jim Crow (Character): https://en.wikipedia.org/wiki/Jim_Crow_(character)

3. Wikipedia — Jump Jim Crow: https://en.wikipedia.org/wiki/Jump_Jim_Crow

4. Jim Crow Museum, Ferris State University — Who Was Jim Crow?: https://jimcrowmuseum.ferris.edu/who/index.htm

5. Jim Crow Museum, Ferris State University — The Origins of Jim Crow: https://jimcrowmuseum.ferris.edu/origins.htm

6. Britannica — What Is the Origin of the Term “Jim Crow”?: https://www.britannica.com/story/what-is-the-origin-of-the-term-jim-crow

7. Britannica — Jim Crow Law: https://www.britannica.com/event/Jim-Crow-law

8. National Archives — Plessy v. Ferguson (1896): https://www.archives.gov/milestone-documents/plessy-v-ferguson

9. Wikipedia — Plessy v. Ferguson: https://en.wikipedia.org/wiki/Plessy_v._Ferguson

10. Wikipedia — Jim Crow Laws: https://en.wikipedia.org/wiki/Jim_Crow_laws

11. PBS — Jim Crow & Plessy v. Ferguson: https://www.pbs.org/tpt/slavery-by-another-name/themes/jim-crow/

12. Howard University School of Law — Reconstruction and Jim Crow Eras: https://library.law.howard.edu/civilrightshistory/blackrights/jimcrow

13. American Battlefield Trust — The Jim Crow Era: https://www.battlefields.org/learn/articles/jim-crow-era

14. Michelle Alexander — The New Jim Crow (Introduction Excerpt): https://newjimcrow.com/about/excerpt-from-the-introduction

15. Economic Policy Institute — Voter Suppression Rooted in Racism: https://www.epi.org/publication/rooted-racism-voter-suppression/

16. American Academy of Arts and Sciences — Somewhere Between Jim Crow and Post-Racialism: https://www.amacad.org/publication/daedalus/somewhere-between-jim-crow-post-racialism

17. Yale Macmillan — History of Minstrel Shows and Jim Crow: https://macmillan.yale.edu/glc/history-minstrel-shows-and-jim-crow

The Marble Statue Problem: Why Half the Story Is No Story at All

By John Turley

On March 12, 2026

In Commentary, History, Politics

A Commentary on Selective American History

There is a version of American history that looks spectacular. Founding Fathers on horseback, industrialists building steel empires from nothing, pioneers pushing west into open lands. It is the kind of history that gets carved into marble, hoisted onto pedestals, and taught as national mythology. Clean. Inspiring. Incomplete. And right now, there is a visible push by some politicians, curriculum reformers, and commentators to make that marble-statue version the only version — to scrub away what one American Historical Association report called the “inconvenient” truths that complicate the picture. What we lose in that scrubbing is not just accuracy. We lose the full human story of this country, and with it, the lessons that might be useful today.

The selective telling is not new, but its current form has new energy. In recent years, legislation has been introduced across multiple states to restrict how teachers discuss slavery, Indigenous displacement, immigration history, and the treatment of women and the poor. The argument is usually dressed up as national unity and pride. But the practical effect is something else: a history curriculum where triumph and innovation are permissible but suffering and exploitation are edited out.

Historians surveying American teachers in 2024 found this impulse reflected in the classroom as well: students arrive with what teachers described as a “marble statues” version of history absorbed from earlier grades, one that freezes the Founders and other heroes in idealized civic memory, stripped of contradiction. The pitch is usually framed as morale: kids need pride and self-esteem, not “division.” The result, though, is a kind of historical editing that turns real people (enslaved Americans, Native communities, women, immigrants, and the poor) into background scenery rather than participants with agency, suffering, and claims on the national memory.

You can see the argument playing out in education policy and curriculum fights. The “patriotic education” push associated with the federal 1776 Commission is a clear example: it cast some approaches to teaching slavery and racism as inherently “anti-American,” and it encouraged a narrative that stresses national ideals while softening the lived realities that contradicted those ideals.

Historians’ organizations have answered back that this kind of narrowing doesn’t create unity so much as it creates amnesia. At the state level, controversies over how to describe or contextualize slavery, down to euphemisms and selective framing, keep resurfacing, because controlling the vocabulary controls the moral takeaway. Florida’s 2023 education standards went so far as to say that enslaved people developed skills that could, in some instances, be applied for their personal benefit, language that critics read as comparing slavery with job training.

The tension between celebratory and critical history also appears in how we interpret national symbols. The Statue of Liberty, now widely read as a welcoming beacon for immigrants, was originally conceived in significant part as a commemoration of the end of slavery in the United States and of the nation’s centennial. Over time, its antislavery meaning was overshadowed by a more comfortable story about voluntary immigration and opportunity as official imagery and public campaigns recast the statue to fit new national needs. This shift did not merely “add” an interpretation; it obscured the connection between American liberty and Black emancipation, pushing aside the reality that millions arrived in chains rather than by choice.

The deeper problem isn’t that Americans disagree about the past—healthy societies argue about meaning all the time. The problem is when disagreement becomes a one-way ratchet: complexity gets labeled “bias,” and only a feel-good storyline qualifies as “neutral.” That’s not neutral. That’s a choice to privilege certain experiences as representative and treat others as “inconvenient.”

Nowhere does this distortion show up more clearly than in how Americans tend to celebrate the industrialists of the late 19th and early 20th centuries — the Gilded Age titans who built railroads, steel mills, and oil empires. Andrew Carnegie, John D. Rockefeller, J.P. Morgan, Cornelius Vanderbilt: these men are frequently held up as models of American ambition and ingenuity, visionaries who transformed a post-Civil War nation into the world’s dominant industrial power. And they did do that. But the marble-statue version stops there, and stopping there is where the dishonesty begins.

Look at what powered that industrial machine: coal. And look at who powered coal. The men — and children — who went underground every day to dig it out of the earth under conditions that were, by any modern standard, a form of institutionalized violence. Between 1880 and 1923, more than 70,000 coal miners died on the job in the United States. That is not a rounding error; it is a small city’s worth of human lives, consumed by an industry that knew the dangers and chose profits over protection. Cave-ins, gas explosions, machinery accidents, and the slow suffocation of black lung took miners in ones and twos on ordinary days, and in mass casualties during what miners grimly called “explosion season” — when dry winter air made methane and coal dust especially volatile. Three major mine disasters in the first decade of the 1900s killed 201, 362, and 239 miners respectively, the latter two occurring within two weeks of each other.

And those were the adults. In the anthracite coal fields of Pennsylvania alone, an estimated 20,000 boys were working as “breaker boys” in 1880 — children as young as eight years old, perched above chutes and conveyor belts for ten hours a day, six days a week, picking slate and impurities out of rushing coal with bare hands. The coal dust was so thick at times it obscured their view. Photographer Lewis Hine documented these children in the early 1900s specifically because he understood that seeing them — their coal-blackened faces, their missing fingers, their flat eyes — was the only way to make comfortable Americans confront the total cost of the industrial miracle. Pennsylvania passed a law in 1885 banning children under twelve from working in coal breakers. The law was routinely ignored; employers forged age documents and desperate families went along with it because the wages, however meager, kept families from starving.

Coal mining is a representative case study because the work was both essential and punishing, and because the labor conflicts were not metaphorical; they were sometimes literally armed. In the coalfields, many miners lived in company towns where the company controlled the housing and the local economy. Some workers were paid in “scrip” redeemable only at the company store, a system that locked families into dependency and debt. When union organizing surged, the backlash could be violent. West Virginia’s Mine Wars culminated in the Battle of Blair Mountain in 1921, widely described as the largest labor uprising in U.S. history, where thousands of miners confronted company-aligned forces and state power. The mine owners deployed heavy machine guns and hired private pilots to drop aerial bombs on the miners.

If you zoom out, this pattern wasn’t limited to coal. The Triangle Shirtwaist Factory fire in 1911 became infamous partly because locked doors and poor safety practices trapped workers—mostly young immigrant women—leading to 146 deaths in minutes.

When workers tried to organize for better pay and safer conditions, the response from the industrialists and their allies was not negotiation. It was force. Henry Clay Frick, chairman of Carnegie Steel, cut worker wages in half while increasing shifts to twelve hours, then hired the Pinkerton Detective Agency, effectively a private army, to break the strike that followed at Homestead, Pennsylvania, in 1892. During the Great Railroad Strike of 1877, when workers walked off the job across the country, state militias were called in. In Maryland, militia fired into a crowd of strikers, killing eleven. In Pittsburgh, twenty more were killed with bayonets and rifle fire. A railroad executive of the era, asked about hungry striking workers, reportedly suggested they be given “a rifle diet for a few days” to see how they liked it. Throughout this period the federal government largely sided with capital against labor.

This is the part of the story that the marble-statue version leaves out — and not because it is marginal. The labor movement that emerged from these battles shaped virtually every protection American workers have today: the eight-hour workday, child labor laws, workplace safety regulations, the right to organize. These were not gifts handed down by generous industrialists. They were won through strikes, suffering, and in some cases, death. Ignoring that history does not honor the industrialists. It dishonors the workers.

The same pattern runs through every thread of American history that is currently under pressure. The story of westward expansion is incomplete without the story of Native displacement and the deliberate destruction of Indigenous cultures. The story of American agriculture is incomplete without the story of enslaved labor and the systems of racial control that followed emancipation. The story of American prosperity is incomplete without the story of immigrant communities channeled into the most dangerous, lowest-paid work and then told to be grateful for the opportunity. Women’s history, for most of American history, was not considered history at all. In each case, leaving out the difficult chapter does not produce a cleaner story. It produces a false one.

The argument for the marble-statue version is usually that complexity is demoralizing: that children need heroes, that citizens need pride, that a nation cannot function if it is constantly relitigating its worst moments. There is something in that concern worth taking seriously. History taught purely as a catalog of grievances is not good history either. But the answer to that problem is not to swap one distortion for another. Good history holds both: the genuine achievement and the genuine cost. Mark Twain understood this when he and co-author Charles Dudley Warner titled their 1873 novel “The Gilded Age,” a phrase that literally describes a thin layer of gold laid over something much cheaper underneath. That phrase has been in the American vocabulary for 150 years because it captures something true about how surfaces can deceive.

A country that cannot look honestly at its own history is a country that will keep repeating the parts it refuses to examine. The enslaved deserve to be in the story. Indigenous people deserve to be in the story. Women deserve to be in the story. The breaker boys deserve to be in the story. The miners killed by the thousands deserve to be in the story. The workers shot by militias while asking for a living wage deserve to be in the story. Not because the story should only be about suffering, but because they were there — and because understanding what they faced, and what they fought for, and what they eventually changed, is how the story makes sense.

Illustration generated by author using ChatGPT.

Sources

American Historical Association. “American Lesson Plan: Curricular Content.” 2024.

https://www.historians.org/teaching-learning/k-12-education/american-lesson-plan/curricular-content/

Brewminate. “Replaceable Lives and Labor Abuse in the Gilded Age: Labor Exploitation and the Human Cost in America’s Gilded Age.” 2026.

https://brewminate.com/replaceable-lives-and-labor-abuse-in-the-gilded-age/

Bureau of Labor Statistics. “History of Child Labor in the United States, Part 1.” 2017.

https://www.bls.gov/opub/mlr/2017/article/history-of-child-labor-in-the-united-states-part-1.htm

Energy History Project, Yale University. “Coal Mining and Labor Conflict.”

https://energyhistory.yale.edu/coal-mining-and-labor-conflict/

Hannah-Jones, Nikole, et al. “A Brief History of Slavery That You Didn’t Learn in School.” New York Times Magazine. 2019.

https://www.nytimes.com/interactive/2019/08/14/magazine/slavery-capitalism.html

Investopedia. “The Gilded Age Explained: An Era of Wealth and Inequality.” 2025.

https://www.investopedia.com/terms/g/gilded-age.asp

MLPP Pressbooks. “Gilded Age Labor Conflict.”

https://mlpp.pressbooks.pub/ushistory2/chapter/chapter-1/

Princeton School of Public and International Affairs. “Princeton SPIA Faculty Reflect on America’s Past as 250th Anniversary Approaches.” 2026.

https://spia.princeton.edu/

USA Today. “Millions of Native People Were Enslaved in the Americas. Their Story Is Rarely Told.” 2025.

https://www.usatoday.com/

Wikipedia. “Breaker Boy.”

https://en.wikipedia.org/wiki/Breaker_boy

Wikipedia. “Robber Baron (Industrialist).”

https://en.wikipedia.org/wiki/Robber_baron_(industrialist)

America250 (U.S. Semiquincentennial Commission). “America250: The United States Semiquincentennial.”

https://www.america250.org/

Bunk History (citing Washington Post reporting). “The Statue of Liberty Was Created to Celebrate Freed Slaves, Not Immigrants.”

https://www.bunkhistory.org/

Upworthy. “The Statue of Liberty Is a Symbol of Welcoming Immigrants. That’s Not What She Was Originally Meant to Be.” 2026.

https://www.upworthy.com/